Live content has never been more valuable or more complex.

A single hour of programming might include:

- Multiple camera angles and returns

- Agency and social feeds

- Talent segments, graphics, and promos

- Squeezed breaks and dynamic ad insertion on streaming

What viewers see as a seamless live show is actually a constant flood of signals. Without structure, that flood becomes almost impossible to manage afterward.

That structure is real-time metadata.

For broadcasters and streaming providers, real-time metadata is the layer that makes every second of live content searchable, reusable, and monetizable. At Digital Nirvana, we built MetadataIQ to automate that layer, generating speech-to-text, video intelligence, and time-based tags as content flows through Avid and other PAM/MAM systems.

In this article, we’ll look at what real-time metadata is, how it powers live news, sports, and streaming events, and where a tool like MetadataIQ fits in.

What Do We Mean by “Real-Time Metadata”?

Real-time metadata is information about your live content that’s:

- Generated as the event happens (or near-live), and

- Attached to exact timecodes in your audio/video streams.

Think of it as a running log that answers “What’s happening right now?” in machine-readable form.

Typical examples for broadcast and streaming include:

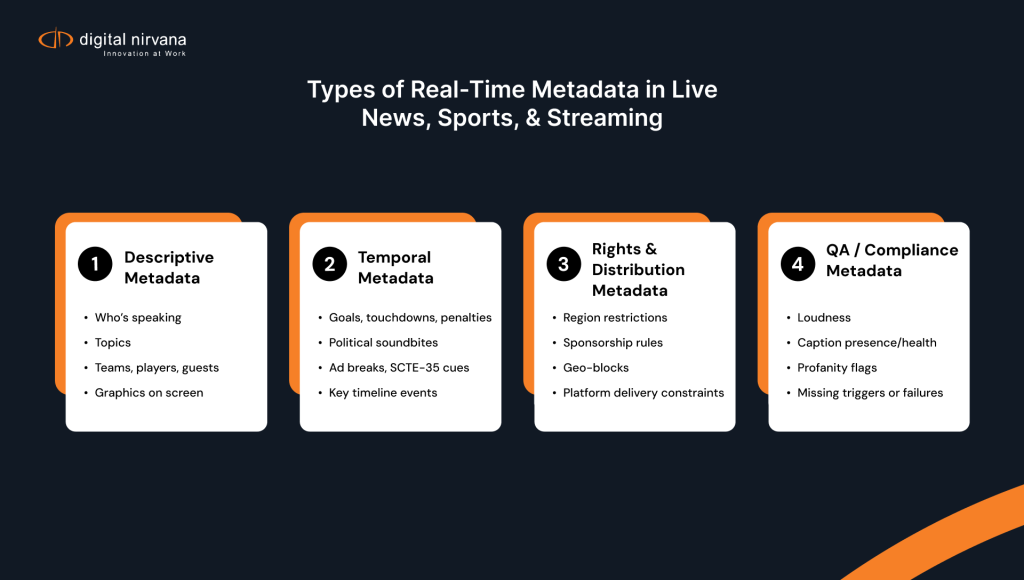

- Descriptive metadata: who’s speaking, which team is playing, what topic is being discussed, what graphic is on screen

- Temporal metadata: time-coded markers for goals, touchdowns, politician soundbites, ad breaks, SCTE-35 cues, and more.

- Rights and distribution metadata: region restrictions, sponsorship relationships, geo-blocks, and platform rules.

- QA/compliance metadata: caption status, loudness checks, profanity flags, missing ad triggers, and other on-air issues
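To make the idea of a time-coded "running log" concrete, here is a minimal sketch of how one such entry might be modeled. The `MetadataEvent` class, its field names, and the sample values are all hypothetical and chosen for illustration; they are not MetadataIQ's actual schema.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class MetadataEvent:
    """One time-coded metadata entry (hypothetical schema, for illustration only)."""
    category: str         # "descriptive", "temporal", "rights", or "qa"
    label: str            # e.g. "speaker", "ad_break", "caption_missing"
    start_seconds: float  # offset from the start of the stream
    end_seconds: float
    attributes: dict = field(default_factory=dict)

    def overlaps(self, t: float) -> bool:
        """True if this event is active at stream offset t (in seconds)."""
        return self.start_seconds <= t < self.end_seconds


def active_at(events, t):
    """Answer "what is happening right now?" for a given stream offset."""
    return [e for e in events if e.overlaps(t)]


# Made-up sample events covering three of the metadata types listed above.
events = [
    MetadataEvent("descriptive", "speaker", 12.0, 45.5, {"name": "Anchor A"}),
    MetadataEvent("temporal", "ad_break", 60.0, 180.0, {"source": "SCTE-35"}),
    MetadataEvent("qa", "caption_missing", 70.0, 75.0),
]

print(json.dumps([asdict(e) for e in active_at(events, 72.0)], indent=2))
```

Because every entry carries its own start and end offsets, the same log can serve an editor looking for a speaker and a QA engineer looking for a caption gap.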

Historically, much of this was logged manually in spreadsheets or simple logging tools. Today, AI and ML enable platforms like MetadataIQ to generate and attach this metadata automatically at scale.

Why Live News Runs on Real-Time Metadata

1. Faster Decisions in the Control Room

Live news teams juggle dozens of feeds, agency lines, remote contributors, social clips, and archived segments. Real-time metadata helps them:

- See who is talking about what across multiple inputs

- Identify segments with specific topics or keywords (e.g., “wildfire,” “election result”)

- Jump to the right moment without scrubbing through full feeds

News-specific metadata workflows are now standard in modern NRCS and PAM/MAM stacks. MetadataIQ, for example, uses news-focused speech-to-text and ML models to quickly describe incoming feeds, then time-indexes that metadata alongside media in Avid MediaCentral so producers can search and drop relevant segments into playlists faster.
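As a rough illustration of the "jump to the right moment" idea, the sketch below searches time-coded transcript segments from several feeds for a keyword. The `Segment` structure, the feed names, and the sample text are assumptions made for this example; a real deployment would query the markers stored in the PAM/MAM rather than an in-memory list.

```python
from typing import NamedTuple


class Segment(NamedTuple):
    """A transcript snippet tied to a feed and a stream offset (hypothetical shape)."""
    feed: str
    start_seconds: float
    text: str


def find_keyword(segments, keyword):
    """Return segments whose text contains the keyword, case-insensitively,
    ordered by feed and then by time."""
    needle = keyword.casefold()
    return sorted(
        (s for s in segments if needle in s.text.casefold()),
        key=lambda s: (s.feed, s.start_seconds),
    )


# Made-up transcript data.
segments = [
    Segment("agency-1", 125.0, "Crews say the wildfire is now 40 percent contained."),
    Segment("remote-2", 30.5, "The election result is expected within the hour."),
    Segment("agency-1", 410.0, "Evacuation orders remain as the Wildfire spreads north."),
]

hits = find_keyword(segments, "wildfire")
for hit in hits:
    print(f"{hit.feed} @ {hit.start_seconds:.1f}s: {hit.text}")
```

Each hit carries the feed and offset a producer needs to cue the clip without scrubbing.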

2. Searchable Archives in Minutes, Not Days

Breaking stories often require historical context: past interviews, earlier coverage, or related explainer pieces. When real-time metadata is written back to the archive:

- Archive producers can search by person, topic, location, or program

- Clips with matching metadata surface instantly

- Follow-up packages and explainer segments can be built while the story is still evolving

Because MetadataIQ indexes transcripts and video intelligence as time-coded markers, the search happens within Avid, not in a separate system editors don’t want to use.

3. Stronger Compliance and Fact-Checking

Real-time metadata underpins post-broadcast accountability:

- Transcripts provide a searchable record of what was said and when

- Metadata ties specific comments to on-screen graphics, lower thirds, and visuals

- Compliance teams can quickly locate questionable segments and create airchecks for regulators or complainants.

Digital Nirvana’s monitoring and compliance stack (e.g., MonitorIQ) uses this combination of captured content and metadata to give engineering and legal teams a living, searchable record of every second that went to air.

How Real-Time Metadata Transforms Live Sports

Live sports is where real-time metadata comes closest to being mission-critical.

1. Tagging the Game as It Happens

During a match, real-time metadata can include:

- Plays and events: goals, penalties, substitutions, timeouts

- Participants: player IDs, coaches, referees

- Context: competition, round, venue, weather

- Sponsorship: brand logos, virtual ads, sponsored segments, ad pod boundaries.

A mix of human loggers and AI can log this information. Digital Nirvana’s own sports metadata guidance explains how live sports data and SCTE-35 cues help track plays and ad breaks, enabling broadcasters to create more content while protecting rights.

2. Instant Highlights and Multi-Platform Publishing

Real-time metadata drives:

- Automatic highlight suggestions (e.g., “show me all goals,” “all third-down conversions”)

- Faster manual highlight cutting, since editors can jump straight to tagged events

- Versioning for different platforms (linear, OTT, social) using metadata-driven templates

When MetadataIQ feeds time-coded tags (speech + video intelligence) into Avid, sports editors don’t have to remember the entire match; they search for a player name, sponsor, or key phrase and cut around those markers.
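A "show me all goals" request can be sketched as a filter over tagged events, with a little padding around each one. The event tuples, the `pre_roll` and `post_roll` defaults, and the function name below are illustrative assumptions, not part of any product API.

```python
def highlight_clips(events, event_type, pre_roll=8.0, post_roll=5.0):
    """Build clip ranges around every tagged event of one type.

    events: iterable of (type, seconds_from_start) pairs.
    Returns a list of (start, end) ranges in seconds; ranges that touch or
    overlap are merged so one passage of play is not cut twice.
    """
    windows = sorted(
        (max(0.0, t - pre_roll), t + post_roll)
        for kind, t in events
        if kind == event_type
    )
    merged = []
    for start, end in windows:
        if merged and start <= merged[-1][1]:
            # Overlaps the previous clip: extend it instead of adding a new one.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


# Made-up match log: (event type, seconds from start of recording).
events = [
    ("goal", 754.0),
    ("penalty", 1310.0),
    ("goal", 1312.0),
    ("goal", 1320.0),
    ("substitution", 2000.0),
]

clips = highlight_clips(events, "goal")
print(clips)  # [(746.0, 759.0), (1304.0, 1325.0)]
```

The two goals eight seconds apart collapse into a single clip, which is usually what an editor wants as a starting point before trimming by hand.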

3. Deeper Engagement and Personalization

Real-time metadata can also drive downstream consumer experiences:

- Dynamic replays and multi-angle highlights in apps

- Personalized playlists (“all your team’s goals,” “mic’d-up moments”)

- Better recommendations and thumbnails based on tagged content

The industry is rapidly moving toward workflows in which AI generates far more metadata than manual logging ever could, enabling new viewer products and sponsorship models.

Streaming Events: Real-Time Metadata Beyond Linear

For streaming-first events such as concerts, esports, live commerce, and conferences, real-time metadata is just as important, but the focus shifts slightly.

1. Dynamic Ad Insertion and Monetization

On streaming, metadata drives server-side ad insertion (SSAI) and ad decisioning. Standards efforts, such as IAB Tech Lab’s Live Event Ad Playbook and concurrent streams APIs, lean heavily on real-time viewer and event metadata to avoid missed ad opportunities and maximize yield during high-traffic events.

Combine that with SCTE-35 cue validation and ad-related metadata in tools like MetadataIQ, and broadcasters can:

- Validate that ad markers fire correctly.

- Prove that campaigns run in the proper breaks.

- Reconcile logs across linear and streaming platforms.
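The checks above can be sketched as a reconciliation between the scheduled breaks and the cue times actually observed in the stream. This example assumes the SCTE-35 cues have already been decoded into offsets in seconds; the break IDs, the two-second tolerance, and the function name are hypothetical.

```python
def reconcile_breaks(scheduled, observed, tolerance=2.0):
    """Match scheduled ad breaks to observed cue times.

    scheduled: dict of break_id -> expected offset in seconds.
    observed: list of cue offsets in seconds (assumed already decoded).
    Returns (matched, missing, unexpected):
      matched    - dict of break_id -> observed time within the tolerance
      missing    - break_ids with no cue close enough
      unexpected - observed cues that matched no scheduled break
    """
    remaining = sorted(observed)
    matched, missing = {}, []
    for break_id, expected in sorted(scheduled.items(), key=lambda kv: kv[1]):
        candidates = [t for t in remaining if abs(t - expected) <= tolerance]
        if candidates:
            best = min(candidates, key=lambda t: abs(t - expected))
            matched[break_id] = best
            remaining.remove(best)  # each cue can satisfy only one break
        else:
            missing.append(break_id)
    return matched, missing, remaining


# Made-up schedule and cue log.
scheduled = {"break-1": 600.0, "break-2": 1500.0, "break-3": 2400.0}
observed = [600.8, 2398.9, 3100.0]

matched, missing, unexpected = reconcile_breaks(scheduled, observed)
print("matched:", matched)
print("missing:", missing)
print("unexpected:", unexpected)
```

A missing entry points to a marker that never fired, while an unexpected entry points to a cue that no campaign was booked against. Running the same comparison against linear and streaming logs gives a simple basis for reconciliation.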

2. Quality of Experience and Real-Time Monitoring

Real-time metadata also powers operational monitoring:

- Bitrate changes and error codes

- Caption status, audio channel presence, loudness

- Player-side events (join/leave, buffering)

Digital Nirvana’s broadcast monitoring guidance describes how digital broadcast monitoring systems pair signal checks with searchable, time-stamped logs so engineers can detect and fix issues in minutes, not after social media explodes.

3. Searchable Live-to-VOD

Finally, metadata captured during the live stream becomes the index for the VOD version:

- Chapters for key segments or sessions

- Searchable transcripts

- Tagged speakers, sponsors, and topics

That lets streaming platforms quickly turn live events into long-tail VOD assets without redoing the tagging work.
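One way to picture live markers becoming a VOD index is to write them out as a WebVTT chapters track, a format many web players can read. The sketch below assumes each marker is a `(start_seconds, title)` pair and that a chapter ends where the next one begins; the session titles and duration are made up.

```python
def vtt_timestamp(seconds):
    """Format an offset in seconds as a WebVTT timestamp (HH:MM:SS.mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def chapters_to_webvtt(markers, duration):
    """Turn (start_seconds, title) markers into WebVTT chapter cues.

    Each chapter runs until the next marker; the last one runs to `duration`.
    """
    ordered = sorted(markers)
    lines = ["WEBVTT", ""]
    for i, (start, title) in enumerate(ordered):
        end = ordered[i + 1][0] if i + 1 < len(ordered) else duration
        lines += [f"{vtt_timestamp(start)} --> {vtt_timestamp(end)}", title, ""]
    return "\n".join(lines)


# Made-up markers captured during a live conference stream.
markers = [(0.0, "Opening keynote"), (1800.0, "Product demo"), (3725.5, "Q&A")]
vtt = chapters_to_webvtt(markers, duration=5400.0)
print(vtt)
```

Since the markers already exist from the live event, producing the chapter track is a formatting step rather than a second tagging pass.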

How Real-Time Metadata Flows Through the Workflow

Here’s a simplified view of how real-time metadata typically moves through a modern broadcast/streaming stack and where MetadataIQ slots in:

- Ingest / Capture

- Real-Time Analysis and Tagging

  - AI engines generate speech-to-text, face and logo recognition, OCR, shot detection, and more.

  - News- or sports-specific models improve accuracy on relevant vocabulary.

- Time-Indexing and Metadata Normalization

  - Metadata is tied to frame-accurate timecodes.

  - Tags are mapped into schemas (e.g., EBUCore, PBCore, custom JSON) that your systems understand.

- Writing Back to Avid / PAM / MAM

  - Tools like MetadataIQ push metadata back as markers, sidecar files, or embedded fields.

  - Editors, producers, and archivists see this metadata directly in Media Composer, MediaCentral, and other interfaces.

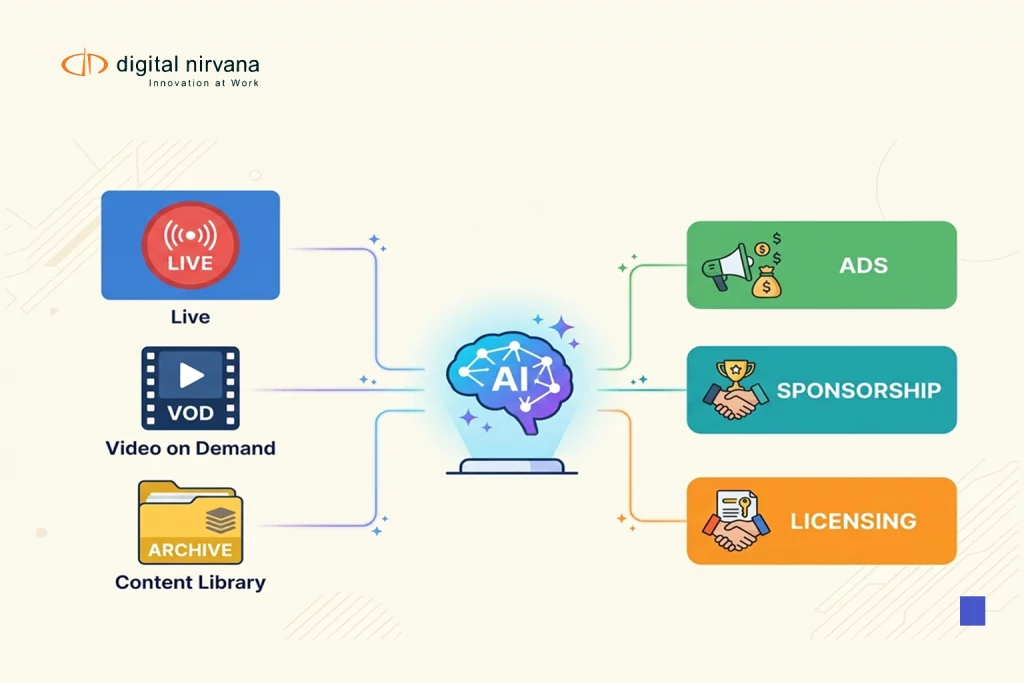

- Downstream Use Cases

  - Editorial: faster search and highlights

  - Compliance: rapid retrieval and proof of performance

  - Monetization: ad verification and sponsorship tracking

  - Product: personalized playlists and more intelligent recommendations

MetadataIQ was designed to automate this loop for Avid-based environments and, via APIs, for broader PAM/MAM stacks, so that real-time metadata becomes part of everyday production rather than a separate “innovation” project.
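The time-indexing and normalization step can be illustrated with a small sketch that converts a frame count into an HH:MM:SS:FF timecode and maps a raw engine result onto a flat marker record. It assumes an integer frame rate and non-drop-frame counting, so 29.97 fps drop-frame material would need different arithmetic. The raw field names (`frame`, `kind`, `text`, `score`) and the output schema are hypothetical and stand in for whatever your engines and target schema actually use.

```python
def frames_to_timecode(frame_count, fps=25):
    """Convert a frame count to a non-drop-frame HH:MM:SS:FF timecode.

    Assumes an integer frame rate (e.g. 25 or 30); drop-frame rates are
    deliberately out of scope for this sketch.
    """
    if frame_count < 0 or fps <= 0:
        raise ValueError("frame_count must be >= 0 and fps must be > 0")
    seconds, frames = divmod(frame_count, fps)
    minutes, seconds = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


def normalize_tag(raw, fps=25):
    """Map a raw engine result onto a flat, hypothetical JSON-style marker."""
    return {
        "timecode": frames_to_timecode(raw["frame"], fps),
        "type": raw["kind"].lower(),
        "value": raw["text"].strip(),
        "confidence": round(float(raw.get("score", 0.0)), 2),
    }


# Made-up output from a logo-recognition engine.
raw_result = {"frame": 90137, "kind": "LOGO", "text": " Sponsor X ", "score": 0.9312}
marker = normalize_tag(raw_result)
print(marker)
```

Normalizing every engine's output into one record shape is what lets the same marker be written back as an Avid marker, a sidecar file, or an embedded field without per-engine special cases.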

Why Digital Nirvana and MetadataIQ Are Aligned with Real-Time Use Cases

Digital Nirvana’s product roadmap is rooted in three realities:

- Live news and sports don’t wait for manual tagging. Blog posts on AI-driven production metadata and news metadata from Digital Nirvana explicitly highlight the need for real-time, AI-assisted tagging that “does not miss a beat” when events unfold live.

- Avid is still the center of many premium workflows. MetadataIQ is integrated with Avid CTMS APIs and has been demoed by Avid at IBC, showcasing how metadata is generated and surfaced transparently inside MediaCentral for news and sports teams.

- Metadata is only valuable if it’s used across the business. Digital Nirvana’s recent content on metadata solutions and tagging tools stresses that metadata should support editorial, rights, legal, and operations teams simultaneously, not live in separate silos or static catalogs.

In other words, the company is not just trying to label video; it’s trying to make real-time metadata an operational asset.

FAQs: Real-Time Metadata for Live News, Sports, and Streaming

How is real-time metadata different from traditional logging?

Traditional logging is usually manual and often done after the event. Real-time metadata is:

- Generated automatically (often by AI)

- Attached to precise timecodes as the event happens

- Designed to flow back into systems like Avid, PAM, MAM, monitoring, and ad platforms

The result is richer, more consistent metadata that shows up far sooner in your workflows.

Do we need to replace our existing Avid, PAM, or MAM systems to use real-time metadata?

No. Real-time metadata tools like MetadataIQ are built to plug into existing systems via APIs and integrations. Avid CTMS support, for example, lets MetadataIQ pull media from Media Composer/MediaCentral and push time-coded markers back without relying on legacy Interplay.

Is real-time metadata only worthwhile for major live events?

Not at all. While big tentpole events show the most obvious ROI, real-time metadata also helps with:

- Regional news bulletins

- Niche sports and long-tail events

- Live concerts, conferences, and corporate streams

Any format where you’ll want to find, verify, clip, or monetize the content later benefits from better live tagging and search.

How should we get started with real-time metadata?

Most broadcasters start with a pilot around a single, high-impact use case, such as:

- Faster highlight creation for a key sports property

- More efficient news package building during a specific daypart

- Compliance and QA for a single channel or streaming app

From there, you can expand real-time metadata workflows to additional channels, sports, or events once you’ve measured time saved, errors avoided, and new content produced.