SMPTE Timecode and Metadata: Why Frame-Accurate Indexing Is Important for Media Teams

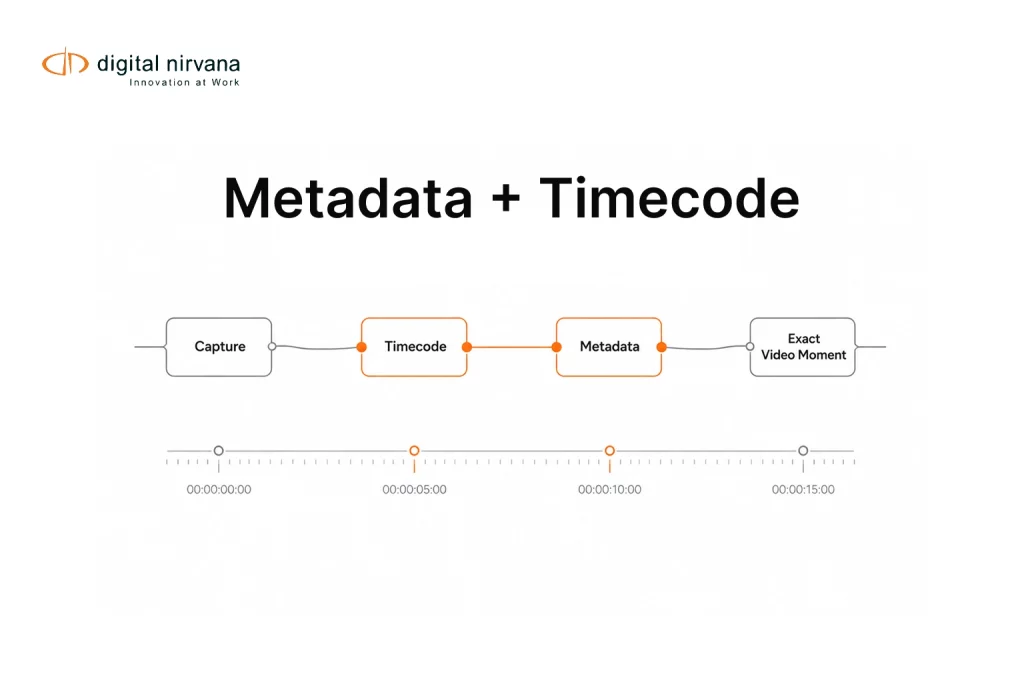

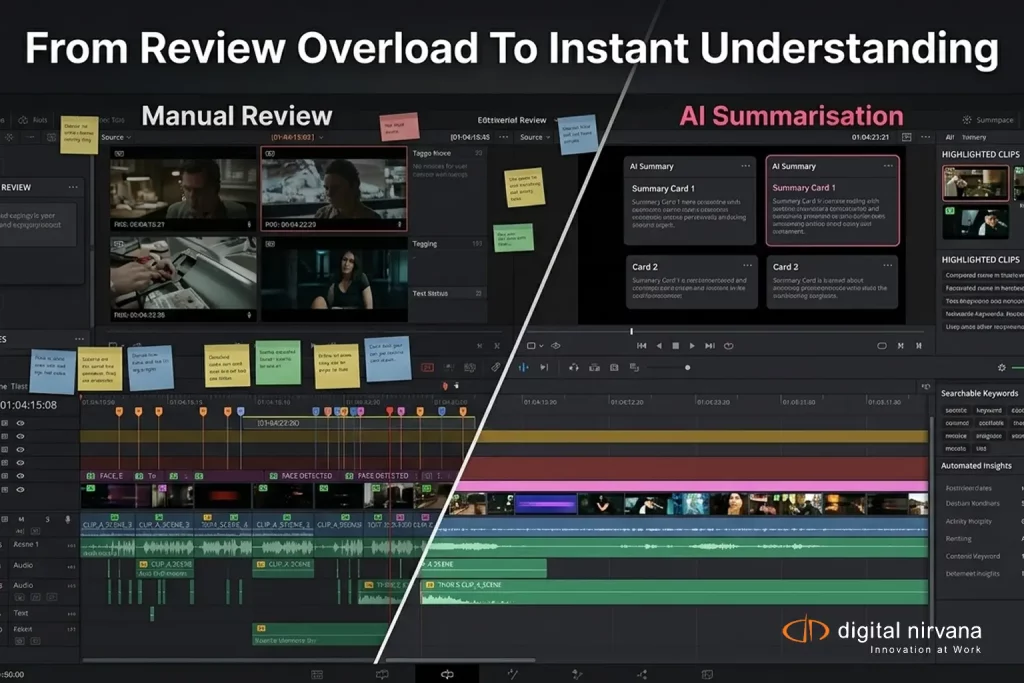

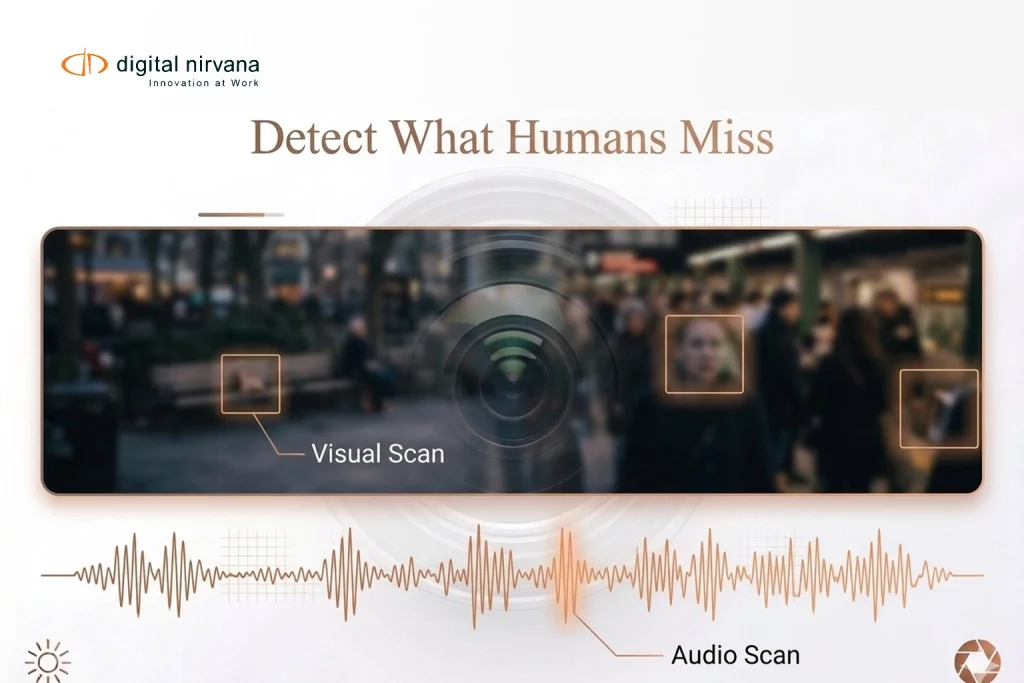

A single missing frame can create hours of confusion in a modern media workflow. Editors struggle to locate the right clip. Producers waste time reviewing footage manually. Compliance teams miss crucial broadcast moments. Archivists deal with incomplete metadata. In fast-moving media environments, even minor indexing gaps can significantly slow production timelines. This is where SMPTE […]
