A single piece of unsuitable content can damage your brand’s trustworthiness almost instantly. Whether it’s profanity in a conversation, violent imagery, or a sensitive scene appearing in the wrong context, your media team is under constant pressure to ensure that content complies with platform rules and meets audience expectations.

The volume involved makes this a huge job: by 2026, more than 500 hours of video are estimated to be uploaded to major platforms every minute, so manual review alone isn’t feasible at that scale.

That’s where Digital Nirvana makes a difference. By using our managed AI to detect unsuitable content, organizations can automatically spot and handle problematic material, helping their teams stay compliant and protecting their brand’s reputation.

Why Is Content Moderation Necessary in Modern Media?

Media distribution has expanded across broadcast, OTT, and digital platforms, and each platform comes with its own content guidelines and compliance standards. Proactive moderation is now a cornerstone of modern media enrichment strategies.

Organizations must ensure that content:

- Meets regulatory requirements

- Aligns with brand values

- Is suitable for target audiences

- Avoids reputational risks

Inappropriate content detection helps teams stay ahead of these requirements by identifying issues early in the workflow.

Operational Hurdles in Manual Content Review

Manual review processes are no longer sustainable for modern, large-scale media operations. Relying solely on human eyes and ears introduces significant risks.

- Overwhelming Content Volume: The sheer scale of daily uploads makes it physically impossible for teams to keep pace without massive overhead.

- Subjectivity & Bias: Human reviewers often interpret sensitive material differently, leading to inconsistent enforcement of platform guidelines.

- Detection Gaps: Fatigue and human error often result in missed instances of profanity, violence, or subtle compliance violations.

- Prolonged Review Cycles: Manual screening adds wait time, delaying content time-to-market.

- Cross-Team Inconsistency: Maintaining a unified moderation standard across global teams is nearly impossible without automated guardrails.

These limitations ultimately prevent organizations from scaling their content output without compromising quality or risking serious regulatory fines.

What Is Inappropriate Content Detection?

Inappropriate content detection uses AI to identify content that may be considered unsafe, offensive, or unsuitable. This technology provides the data intelligence needed to filter large libraries without human reviewers watching frame by frame.

Such content includes:

- Profanity in speech or subtitles

- Violent scenes or imagery

- Nudity or explicit visuals

- Hate speech or sensitive language

- Contextual risks based on content themes

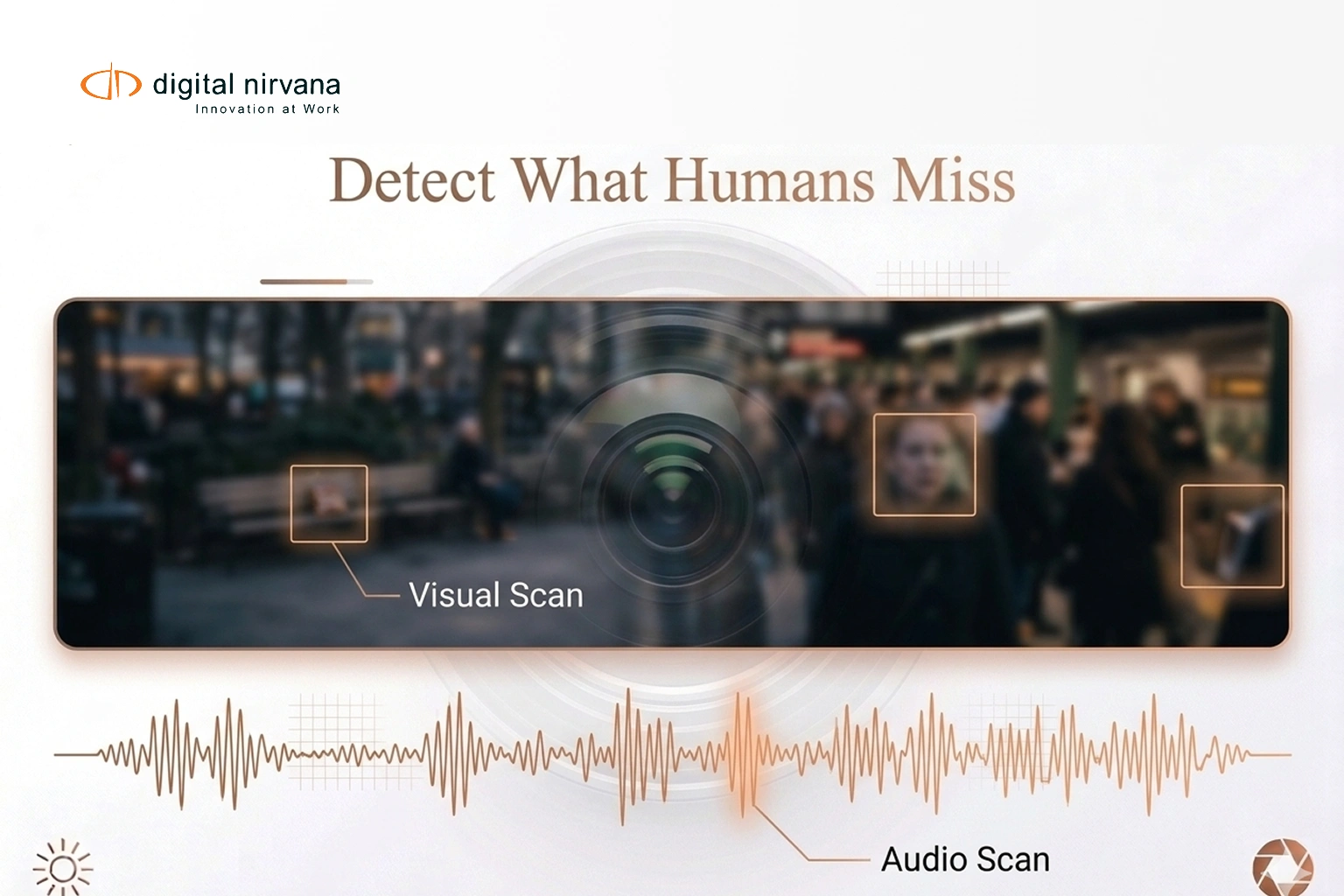

Sensitive content detection systems analyze both audio and visual elements to provide a complete understanding of media content.

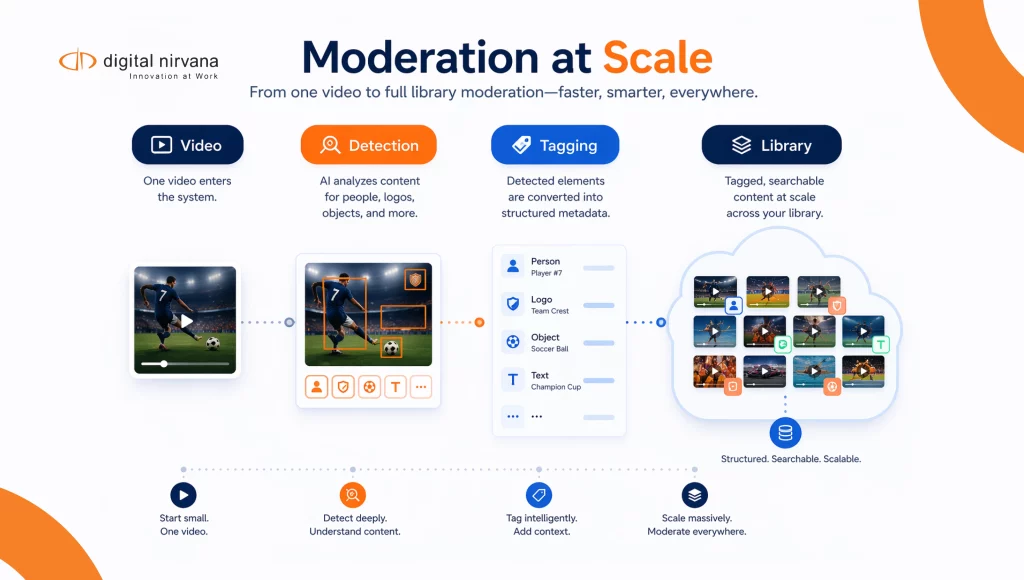

How Does Sensitive Content Detection Work in Video?

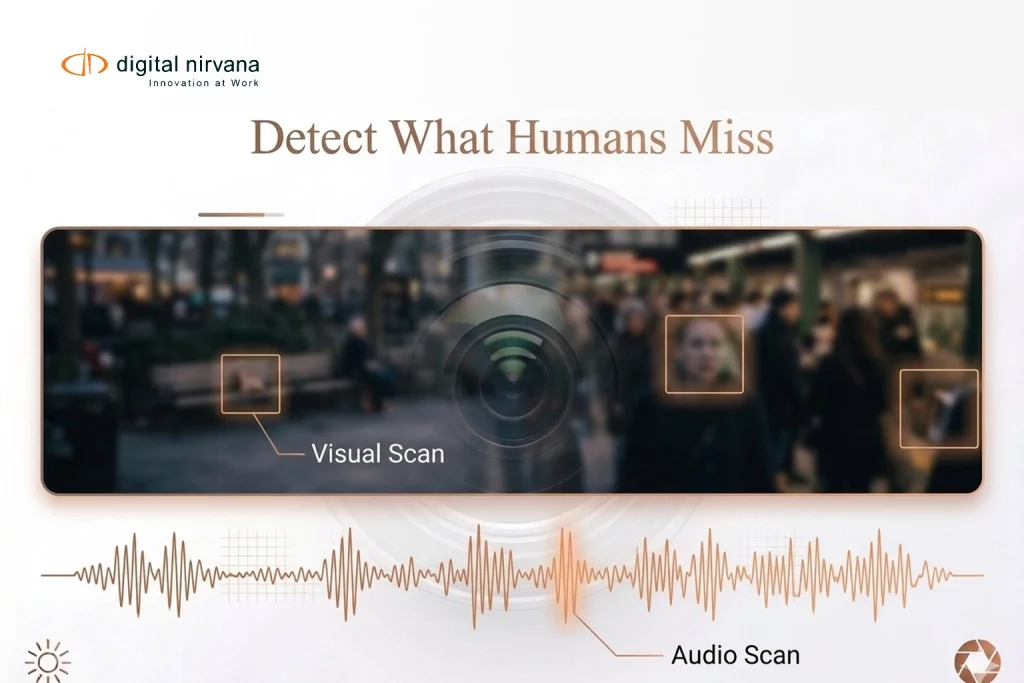

The process combines speech analysis, visual recognition, and contextual classification. In high-stakes environments such as investment research, ensuring that sensitive financial commentary or proprietary data is flagged correctly is crucial for maintaining strict institutional compliance.

In full, the detection pipeline has five stages:

- Speech Analysis: Audio is transcribed and analyzed to detect profanity or harmful language.

- Visual Recognition: Frames are scanned to identify violent or explicit imagery.

- Contextual Classification: AI models evaluate the overall context of scenes.

- Timecode Tagging: Each detected instance is linked to a precise timestamp.

- Metadata Indexing: Detected content is stored as searchable metadata.

This allows teams to quickly locate and review flagged segments.
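The speech analysis, timecode tagging, and metadata indexing stages can be illustrated with a minimal sketch. It assumes an upstream speech-to-text step has already produced timed transcript segments, and it stands in a simple word list for the AI models a production system would use. The `Flag` class, `BLOCKLIST`, and both function names are hypothetical and are not part of any Digital Nirvana API.

```python
from dataclasses import dataclass

# Hypothetical blocklist; a real system would rely on trained models, not a word list.
BLOCKLIST = {"damn", "hell"}

@dataclass(frozen=True)
class Flag:
    category: str   # risk category, e.g. "profanity"
    start_s: float  # start of the flagged segment, in seconds
    end_s: float    # end of the flagged segment, in seconds
    evidence: str   # the term that triggered the flag

def flag_transcript(segments):
    """Return one Flag per blocklisted word found in timed transcript segments.

    `segments` is a list of (start_s, end_s, text) tuples, assumed to come
    from a speech-to-text step.
    """
    flags = []
    for start_s, end_s, text in segments:
        for word in text.lower().split():
            term = word.strip(".,!?\"'")  # drop surrounding punctuation
            if term in BLOCKLIST:
                flags.append(Flag("profanity", start_s, end_s, term))
    return flags

def build_index(flags):
    """Group flags by category so they can be looked up as metadata."""
    index = {}
    for flag in flags:
        index.setdefault(flag.category, []).append(flag)
    return index

segments = [
    (0.0, 4.2, "Welcome back to the show."),
    (4.2, 9.8, "Well, damn, that was close!"),
]
index = build_index(flag_transcript(segments))
```

Here `index["profanity"]` holds a single flag covering 4.2 to 9.8 seconds, which is the span a reviewer would jump to.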

How Does Managed AI Help with Detection?

Digital Nirvana integrates these safety layers into a broader workflow through managed AI. This platform allows organizations to search and filter content by risk categories, supported by enterprise-grade cloud engineering to handle the processing power required for real-time detection.

This enables organizations to:

- Automatically detect profanity, violence, and sensitive content.

- Tag flagged segments with precise timecodes.

- Combine detection with transcription, OCR, and logo tracking.

- Search and filter content based on risk categories.

- Scale review processes across large media libraries.

This approach ensures that content moderation is both efficient and consistent.
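As a rough illustration of searching and filtering by risk category, the sketch below builds a review queue from a list of flags. The `Flag` fields, the confidence scores, the asset IDs, and the `filter_flags` function are all assumptions made for this example; the actual platform's data model and query interface are not described here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Flag:
    asset_id: str      # hypothetical identifier of the media asset
    category: str      # risk category, e.g. "violence"
    start_s: float     # position of the flag, in seconds
    confidence: float  # assumed model score between 0 and 1

def filter_flags(flags, categories, min_confidence=0.5):
    """Keep flags in the requested categories at or above a confidence threshold,
    ordered by asset and then by position in the asset."""
    wanted = set(categories)
    hits = [
        f for f in flags
        if f.category in wanted and f.confidence >= min_confidence
    ]
    return sorted(hits, key=lambda f: (f.asset_id, f.start_s))

flags = [
    Flag("ep-102", "violence", 1310.0, 0.91),
    Flag("ep-101", "profanity", 42.5, 0.88),
    Flag("ep-101", "violence", 15.0, 0.35),  # below threshold, dropped
    Flag("ep-101", "nudity", 600.0, 0.97),   # category not requested, dropped
]
review_queue = filter_flags(flags, ["violence", "profanity"], min_confidence=0.6)
```

The threshold is a design choice: a lower value sends more borderline segments to human reviewers, while a higher value shortens the queue at the risk of missing some.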

Operational Uses Within Broadcast and OTT Workflows

Detection supports several operational workflows. It can even be used within learning management systems to ensure student-facing content remains age-appropriate and professional.

- Broadcast Compliance

Broadcasters must follow strict legal rules that vary by country. Automated systems scan entire libraries to ensure content complies with local age restrictions and ‘watershed’ laws. Before content ever airs, the process flags anything that should not be shown, such as excessive violence, profanity, or forbidden symbols, helping broadcasters avoid expensive legal penalties or even the loss of a broadcast license.

- OTT Platform Moderation

With thousands of hours of user-generated and licensed content arriving every day, manually reviewing every item isn’t possible for streaming services. Automated software filters and categorizes content into levels of sensitivity. This lets platforms create ‘Safe Search’ filters, automatically generate warning labels, and ensure viewers are shown only content that fits their profile settings.

- Brand Safety Monitoring

Advertisers are careful about where their ads appear. Brand safety tools analyze the audio and visuals surrounding an ad placement to ensure the ad does not run next to not-safe-for-work material, extreme political content, or distressing news stories. This helps protect a company’s reputation by ensuring its brand is linked only to positive, high-quality environments.

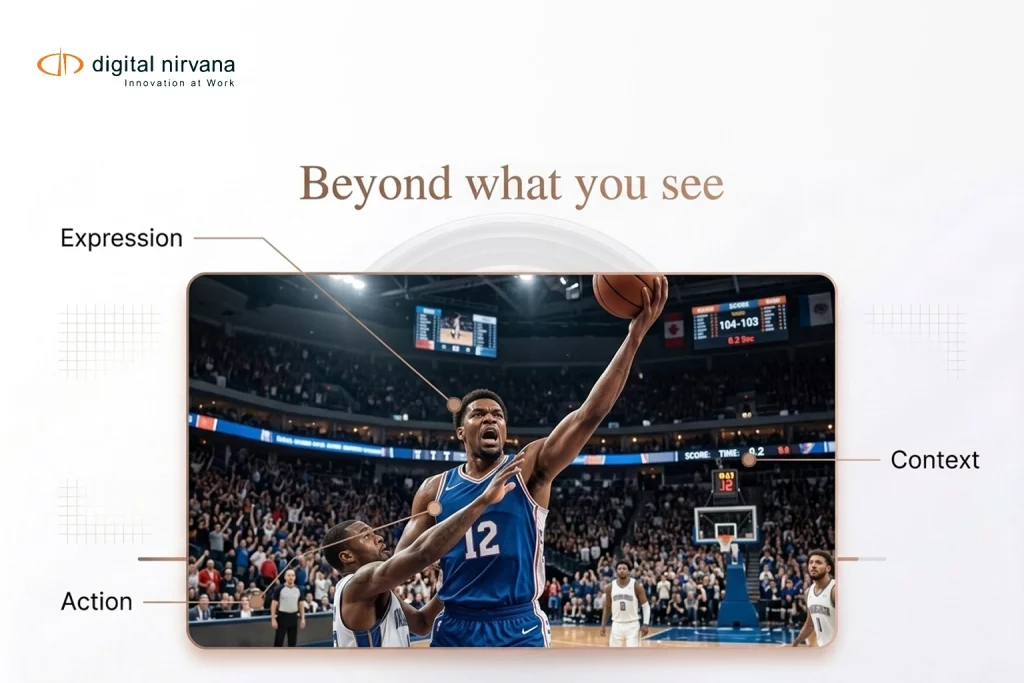

- Content Review And Editing

Post-production teams often have to find one particular scene in a huge, multi-hour, unedited cut. AI-driven review tools give a ‘heatmap’ of the most concerning parts. Rather than watching from beginning to end, editors can go directly to the exact times where they need to blur a logo, edit out a word, or remove a scene, greatly reducing the time to final delivery.
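One plausible way to build the review ‘heatmap’ mentioned above is to total the flagged seconds in each fixed-length time bucket. The sketch below assumes flags arrive as (start, end) spans in seconds; the `review_heatmap` function and the 60-second bucket size are illustrative choices, not a description of how any particular product computes its heatmap.

```python
import math

def review_heatmap(flag_spans, duration_s, bucket_s=60.0):
    """Return the total flagged seconds in each bucket of the timeline.

    Overlapping flags are counted once per flag, so a stretch that trips
    several detectors at the same time shows up as hotter.
    """
    n_buckets = math.ceil(duration_s / bucket_s)
    heat = [0.0] * n_buckets
    for start_s, end_s in flag_spans:
        first = int(start_s // bucket_s)
        last = min(int(end_s // bucket_s), n_buckets - 1)
        for b in range(first, last + 1):
            # Add only the part of the span that falls inside bucket b.
            lo = max(start_s, b * bucket_s)
            hi = min(end_s, (b + 1) * bucket_s)
            heat[b] += max(0.0, hi - lo)
    return heat

spans = [(50.0, 70.0), (65.0, 80.0), (200.0, 205.0)]
heat = review_heatmap(spans, duration_s=240.0)
hottest = max(range(len(heat)), key=heat.__getitem__)  # index of the busiest minute
```

For this input `heat` is `[10.0, 25.0, 0.0, 5.0]`, so an editor would start with the second minute of the file.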

Strategic ROI of Automated Content Detection

Automated content detection delivers measurable strategic ROI by combining multimodal intelligence with scalable compliance workflows.

- Multimodal Safety Scans: Simultaneously analyzes speech for profanity and frames for violent or explicit imagery to provide total content awareness.

- Contextual AI Logic: Goes beyond simple keyword matching to assess a scene’s overall theme and context, reducing false positives.

- Frame-Accurate Flagging: Automatically tags risky segments with precise timestamps, allowing editors to jump directly to sections requiring review.

- Scalable Compliance: Manages massive daily upload volumes, ensuring that every asset complies with regional “watershed” laws and age restrictions.

- Integrated Moderation Hub: Feeds detection data directly into the managed AI workflow, combining safety alerts with OCR and transcription for a unified review process.

The result is a smarter compliance ecosystem, one that reduces operational costs, accelerates turnaround times, and strengthens trust across broadcast, OTT, and digital content networks.
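To make frame-accurate flagging concrete, the sketch below converts a flag's position in seconds into an HH:MM:SS:FF timecode that an editor can enter in an editing tool. The article does not specify a frame rate or timecode format, so this assumes non-drop-frame timecode at an integer frame rate (25 fps by default); fractional rates such as 29.97 fps need drop-frame handling that is not shown. The `to_timecode` name is hypothetical.

```python
def to_timecode(seconds, fps=25):
    """Format a time in seconds as HH:MM:SS:FF (non-drop-frame, integer fps)."""
    total_frames = round(seconds * fps)  # snap to the nearest whole frame
    frames = total_frames % fps          # frame number within the second
    total_seconds = total_frames // fps
    hours, remainder = divmod(total_seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"

flag_position = to_timecode(3723.48)  # "01:02:03:12" at 25 fps
```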

Digital Nirvana’s Inappropriate Content Detection Technology

Digital Nirvana’s inappropriate content detection acts as an automated safety shield for your media, instantly scanning audio and video for profanity, violence, and explicit material.

Using managed AI for inappropriate content detection transforms media review from a cost center into a high-speed efficiency engine.

- Accelerated Time-to-Market: Reduces manual review time, allowing content to go live faster without bottlenecks.

- Precision Moderation: Improves accuracy in detecting profanity and violence, reducing the risks of human fatigue and oversight.

- Global Compliance Standards: Maintains consistent safety guardrails across all channels, ensuring every asset meets regional regulatory demands.

- Brand Equity Protection: Safeguards your reputation by preventing sensitive content from surfacing in inappropriate or public-facing contexts.

- Infinite Scalability: Allows media operations to handle enormous volumes through cloud engineering.

By replacing subjective manual screening with high-speed AI, you eliminate moderation bottlenecks and protect your brand’s reputation across all platforms. This technology ensures your library is consistently compliant and safe for global distribution.

FAQs

What is inappropriate content detection?

It uses AI to identify content that may be offensive, unsafe, or unsuitable for an audience.

What types of content can it detect?

Systems can pick out profanity, extreme violence, explicit visuals, and other sensitive material.

How accurate is it?

Accuracy depends on the quality of the models and data, but today’s systems deliver reliable results for many media workflows.

How does managed AI help?

Managed AI integrates detection with timecode indexing and metadata, making it much simpler to review and manage flagged content.

Does it work for archived media?

Yes, it works for both new and archived media libraries.

Conclusion

As media volumes grow, keeping your content safe gets harder all the time. Manual review methods can no longer handle both the volume and the speed required.

Inappropriate content detection provides a systematic, automated approach to identifying potential problems, keeping you compliant, and protecting your brand image wherever your content appears. Digital Nirvana provides AI-driven tools that transform content moderation from a bottleneck into a smooth, high-speed safety net.

Key Takeaways:

- Inappropriate content detection is essential to maintaining brand safety and compliance across broadcast and OTT platforms.

- It automatically identifies risky media elements, such as profanity, violence, or explicit imagery, through advanced audio and visual analysis.

- This dual-layered approach ensures that both spoken and visual content are evaluated for sensitivity, enabling comprehensive moderation.

- Each flagged segment is marked with a precise timecode, allowing editors to jump directly to the sections that require review rather than manually scanning entire files.

- Integrated within managed AI, these detection capabilities form a unified workflow that connects safety alerts, transcription, and OCR data for verification.

- The result is a smarter, faster, and more reliable compliance process that enhances operational efficiency, protects brand reputation, and ensures content meets global standards for appropriateness and audience trust.