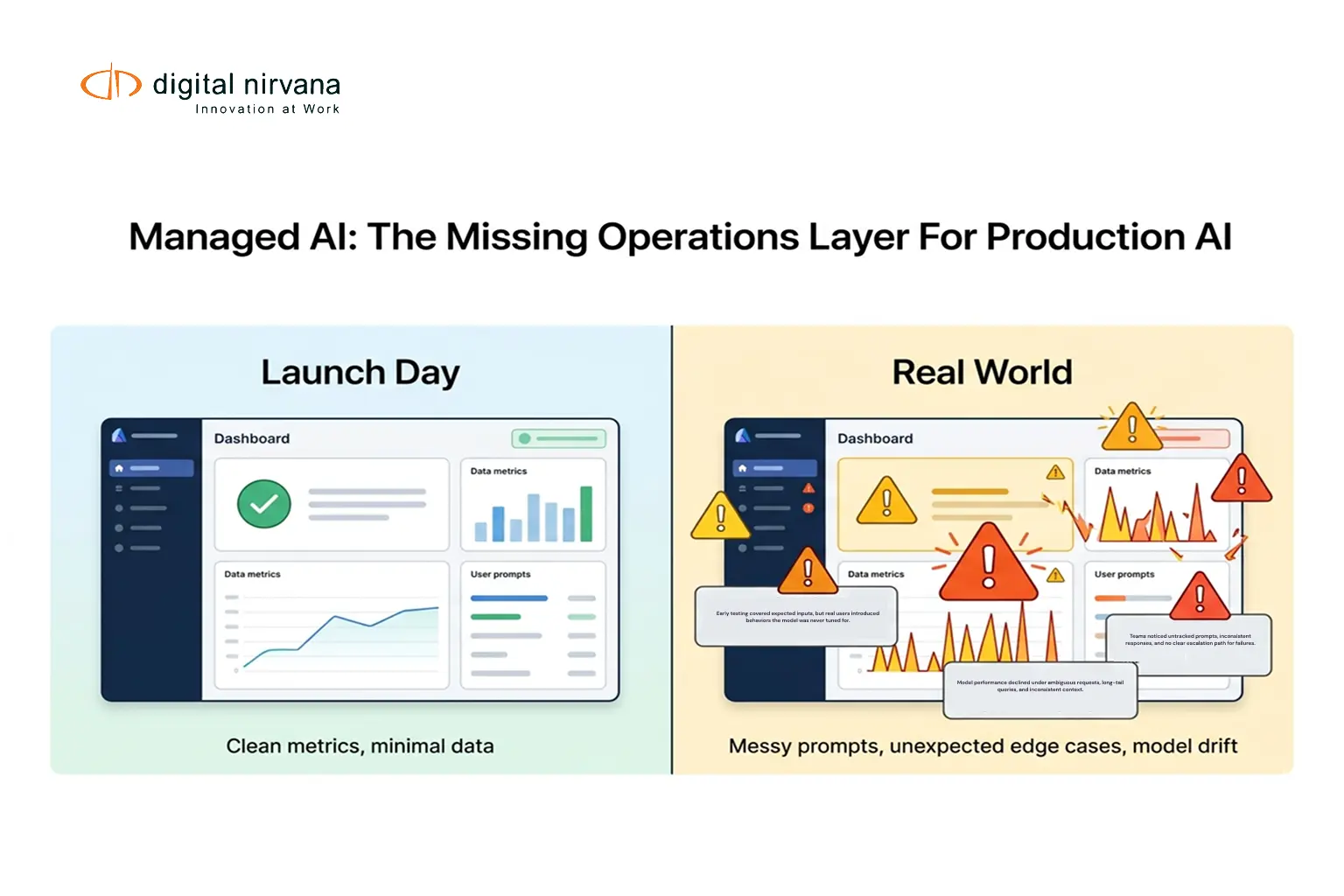

Your AI worked perfectly in testing. Then real users arrived, edge cases piled up, prompts evolved, and accuracy started slipping in ways dashboards did not fully explain.

That is the gap Managed AI is built to close.

Digital Nirvana’s Managed AI Operational Services provide an operational layer for enterprise AI. The service combines continuous monitoring with structured human oversight so that models, prompts, data, and outputs stay accurate, safe, relevant, and trustworthy in real business conditions, not just at launch.

Why Production AI Breaks After Launch

In production, AI quality is a moving target. Even when your infrastructure is stable, the following problems keep emerging:

- Output Drift

Language patterns, relevance, and completeness shift as users, content, and business context evolve. Many teams discover that drift shows up as “it feels worse” before it shows up in a simple metric.

- Prompt Drift And Prompt Decay

Prompts are operational assets. Small edits can cause big side effects, and regressions are easy to miss without structured testing.

- Hallucinations And Policy Risk

Automated monitoring helps, but it often misses semantic issues, subtle inaccuracies, and context failures that humans immediately catch.

- Data And Label Quality Issues

If labels are inconsistent or generic, the model improves in the wrong direction. Domain nuance matters, especially in regulated or customer-facing workflows.

The takeaway is simple. AI in production must be actively operated, not just deployed.

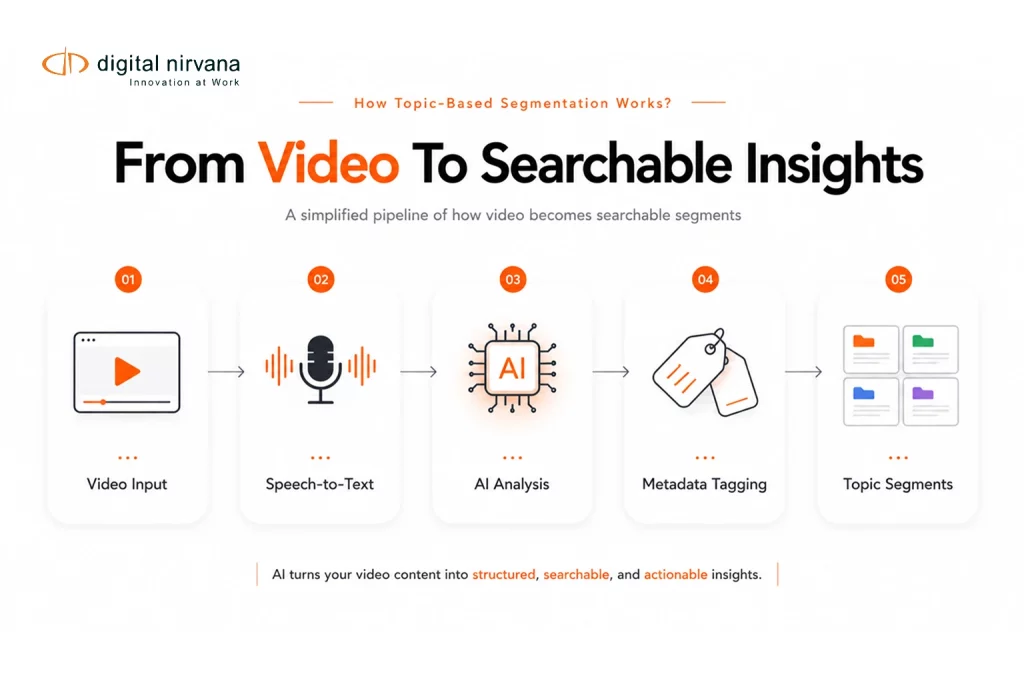

What Managed AI Operational Services Actually Do

Managed AI is a continuous operating model for AI quality, safety, and governance.

Instead of only measuring system health, it continuously evaluates real outputs, stress-tests prompts, improves domain datasets, and routes high-risk or low-confidence items through human review. The result is proactive detection, prioritized remediation, and verified improvements through closed-loop cycles.

This approach matches what Digital Nirvana already emphasizes in other AI-heavy workflows: governance, thresholds, human review queues, and audit trails that keep automated systems reliable.

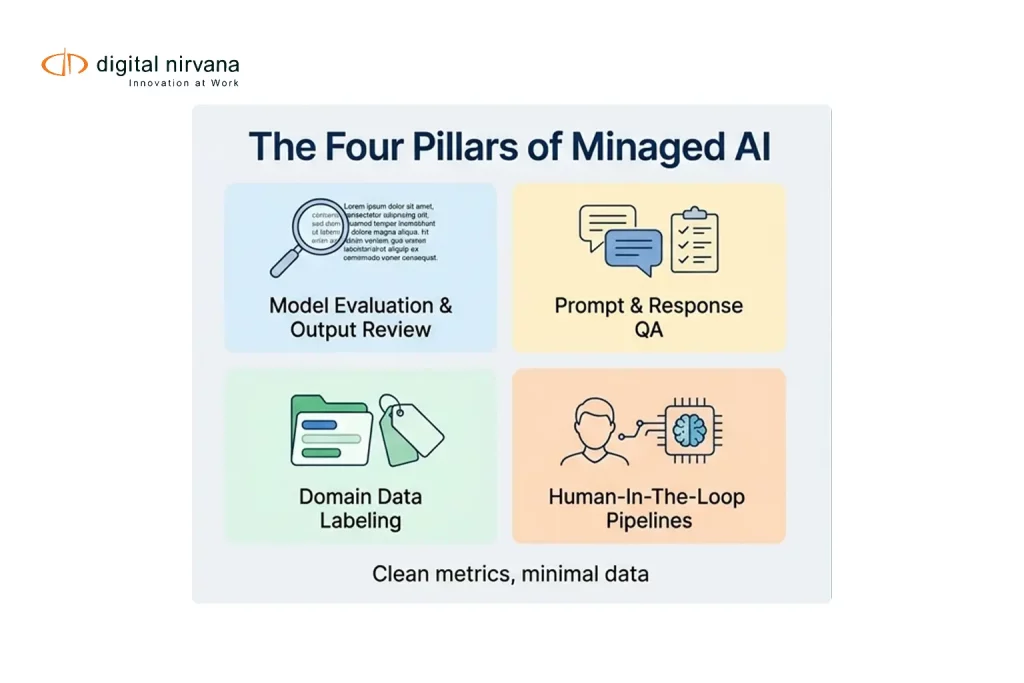

The Four Pillars Of Managed AI

Model Evaluation And Output Review

What it is

Continuous review of real production outputs using structured sampling and expert validation to detect accuracy issues, hallucinations, bias, unsafe content, and performance drift early.

What it prevents

Quiet quality degradation, brittle behavior changes, and “we did not see it coming” failures that appear only after user impact.

Prompt And Response Quality Assurance

What it is

Ongoing prompt evaluation, stress testing, and regression testing so prompts and responses stay accurate, safe, on-tone, and aligned to business intent as prompts evolve.

What it prevents

Prompt regressions that alter meaning, break policy boundaries, or change tone and reliability over time.
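
As a rough illustration of what prompt regression testing can look like, the sketch below runs a small golden set of inputs against two prompt versions and lists every check that fails. All names here (`RegressionCase`, `run_regression`, the two stand-in model functions, and the refund example) are hypothetical and chosen for illustration; simple phrase checks are an assumption, and a real suite would likely add semantic or rubric-based scoring.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RegressionCase:
    """One golden-set entry: an input plus checks the response must satisfy."""
    name: str
    user_input: str
    must_include: List[str] = field(default_factory=list)
    must_not_include: List[str] = field(default_factory=list)


def run_regression(generate: Callable[[str], str],
                   cases: List[RegressionCase]) -> List[str]:
    """Return a readable failure message for every check that does not hold."""
    failures = []
    for case in cases:
        response = generate(case.user_input).lower()
        for phrase in case.must_include:
            if phrase.lower() not in response:
                failures.append(f"{case.name}: missing '{phrase}'")
        for phrase in case.must_not_include:
            if phrase.lower() in response:
                failures.append(f"{case.name}: contains forbidden '{phrase}'")
    return failures


# Hypothetical stand-ins for a model called with the old and new prompt versions.
def old_prompt_model(user_input: str) -> str:
    return "Refunds are available within 30 days. Contact support for help."


def new_prompt_model(user_input: str) -> str:
    return "We guarantee a refund any time you ask!"


CASES = [
    RegressionCase(
        name="refund-policy",
        user_input="Can I get a refund?",
        must_include=["30 days"],
        must_not_include=["guarantee"],
    ),
]

baseline_failures = run_regression(old_prompt_model, CASES)
candidate_failures = run_regression(new_prompt_model, CASES)

for message in candidate_failures:
    print("REGRESSION:", message)
```

Comparing the candidate's failures against the baseline's is what separates a new regression from a known, pre-existing gap.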

Domain-Aware Data Labeling

What it is

Expert-led annotation using business-specific taxonomies and guidelines, plus multi-pass QA for label consistency.

What it improves

Training data quality, evaluation coverage, and targeted improvement of recurring error patterns through better ground truth.
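
One common way to quantify label consistency between two annotation passes is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below is a minimal, assumption-laden example: the function name, the ticket categories, and the two-annotator setup are illustrative and not a description of Digital Nirvana's actual QA process.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("Both annotators must label the same non-empty item set")
    n = len(labels_a)
    # Fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labeled at random with
    # their own label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical first-pass and second-pass labels for four support tickets.
first_pass = ["billing", "billing", "outage", "outage"]
second_pass = ["billing", "billing", "outage", "billing"]

kappa = cohens_kappa(first_pass, second_pass)
# Items where the passes disagree are candidates for guideline clarification.
disagreements = [i for i, (a, b) in enumerate(zip(first_pass, second_pass)) if a != b]
print(f"kappa={kappa:.2f}, disagreements at indices {disagreements}")
```

A low kappa on a particular category usually points at an ambiguous guideline or taxonomy entry rather than at a careless annotator.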

Human-In-The-Loop Review Pipelines

What it is

Structured workflows that route low-confidence, novel, or high-impact outputs to trained reviewers, then feed decisions back into models, prompts, and policies.

What it enables

Accountability where it matters, while reducing future review volume through closed-loop learning.
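
The routing rule described above can be sketched as a small function. This is only an illustration: the `ModelOutput` fields, the 0.80 confidence threshold, and the reason codes are assumptions, and a production pipeline would tune them per workflow.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumed threshold; in practice this would be calibrated per use case.
CONFIDENCE_THRESHOLD = 0.80


@dataclass
class ModelOutput:
    output_id: str
    confidence: float       # model or scorer confidence in [0, 1]
    impact: str             # "low", "medium", or "high"
    policy_sensitive: bool  # touches a regulated or policy-bound topic
    is_novel: bool          # input unlike anything previously reviewed


def route(output: ModelOutput) -> Tuple[str, List[str]]:
    """Return the destination queue and the reasons that triggered review."""
    reasons = []
    if output.confidence < CONFIDENCE_THRESHOLD:
        reasons.append("low_confidence")
    if output.impact == "high":
        reasons.append("high_impact")
    if output.policy_sensitive:
        reasons.append("policy_sensitive")
    if output.is_novel:
        reasons.append("novel_input")
    return ("human_review", reasons) if reasons else ("auto_approve", [])


routine = ModelOutput("a", confidence=0.95, impact="low",
                      policy_sensitive=False, is_novel=False)
risky = ModelOutput("b", confidence=0.60, impact="high",
                    policy_sensitive=False, is_novel=False)

print(route(routine))
print(route(risky))
```

Recording the reasons alongside the decision gives reviewers context and makes it possible to track which triggers shrink as the system improves.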

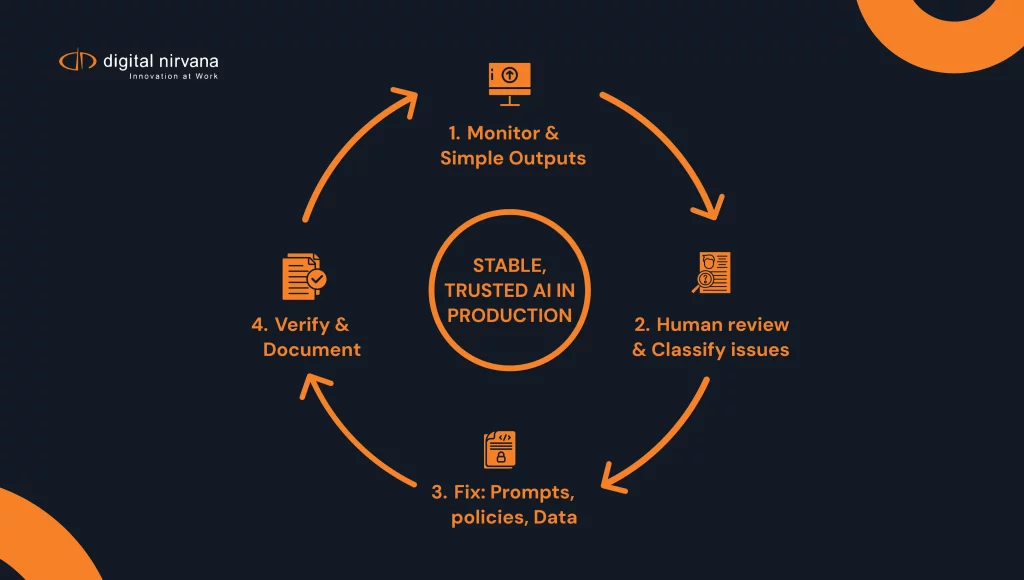

How Managed AI Works Day To Day

A production-ready Managed AI program typically runs as a steady operational cycle:

- Continuous Sampling And Monitoring

Risk-based sampling plus trend monitoring across key quality dimensions, including accuracy, safety, relevance, and business alignment.

- Human Review Where It Adds The Most Value

Experts validate outputs that are high-risk, low-confidence, or business-critical, and classify issues by severity and impact.

- Actionable Remediation

Findings turn into prioritized fix queues, prompt changes, policy adjustments, and data labeling needs.

- Verification After Fixes

Changes are re-tested so improvements are confirmed, not assumed.

- Audit-Ready Documentation

Decisions, changes, and outcomes are documented so you can show what changed, why it changed, and how it performed after the change. This is consistent with Digital Nirvana’s governance-first approach in other AI workflows.
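
To make the risk-based sampling step in this cycle more concrete, here is a minimal sketch in which every high-risk output is reviewed and lower-risk tiers are sampled at smaller rates. The tier names, the rates in `SAMPLE_RATES`, and the `risk` field are assumptions for illustration only.

```python
import random
from typing import Dict, List, Optional

# Assumed review rates per risk tier: review all high-risk outputs,
# a quarter of medium-risk ones, and a small slice of low-risk ones.
SAMPLE_RATES = {"high": 1.0, "medium": 0.25, "low": 0.05}


def sample_for_review(outputs: List[Dict],
                      rates: Optional[Dict[str, float]] = None,
                      seed: int = 0) -> List[Dict]:
    """Pick outputs for human review with a probability set by risk tier."""
    rates = SAMPLE_RATES if rates is None else rates
    rng = random.Random(seed)  # seeded so a sampling run can be reproduced
    return [o for o in outputs if rng.random() < rates[o["risk"]]]


# Hypothetical batch of production outputs already tagged with a risk tier.
batch = (
    [{"id": i, "risk": "high"} for i in range(5)]
    + [{"id": i, "risk": "medium"} for i in range(5, 25)]
    + [{"id": i, "risk": "low"} for i in range(25, 125)]
)

review_queue = sample_for_review(batch)
print(f"{len(review_queue)} of {len(batch)} outputs queued for review")
```

Seeding the sampler is a small design choice that supports the audit-ready documentation step, since the same batch and seed reproduce the same review queue.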

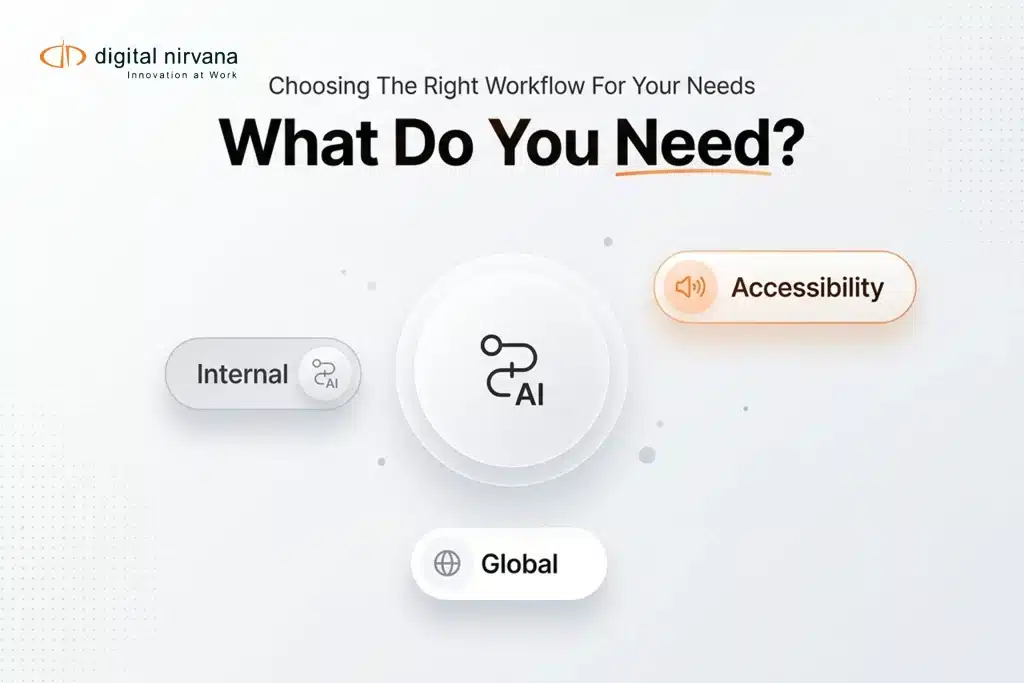

Who Should Use Managed AI Operations And Governance

Managed AI is designed for organizations running AI in production where quality and accountability are non-negotiable:

- Enterprises scaling GenAI and ML across teams and use cases

- Regulated industries that require explainability and oversight

- Customer-facing workflows where errors impact revenue, trust, or compliance

- Teams that already have monitoring tools, but still see quality surprises in the real world

What You Get: Outcomes That Matter In Production

When Managed AI is done well, the outcomes are practical:

- Sustained accuracy and consistency over time

- Faster detection of drift, hallucinations, and policy risk

- Prompts that stay stable through structured QA and regression testing

- Better datasets through domain-aware labeling and clearer taxonomies

- Human accountability for high-risk decisions through HITL routing

- Clear reporting on operational health, improvement progress, and remaining risk

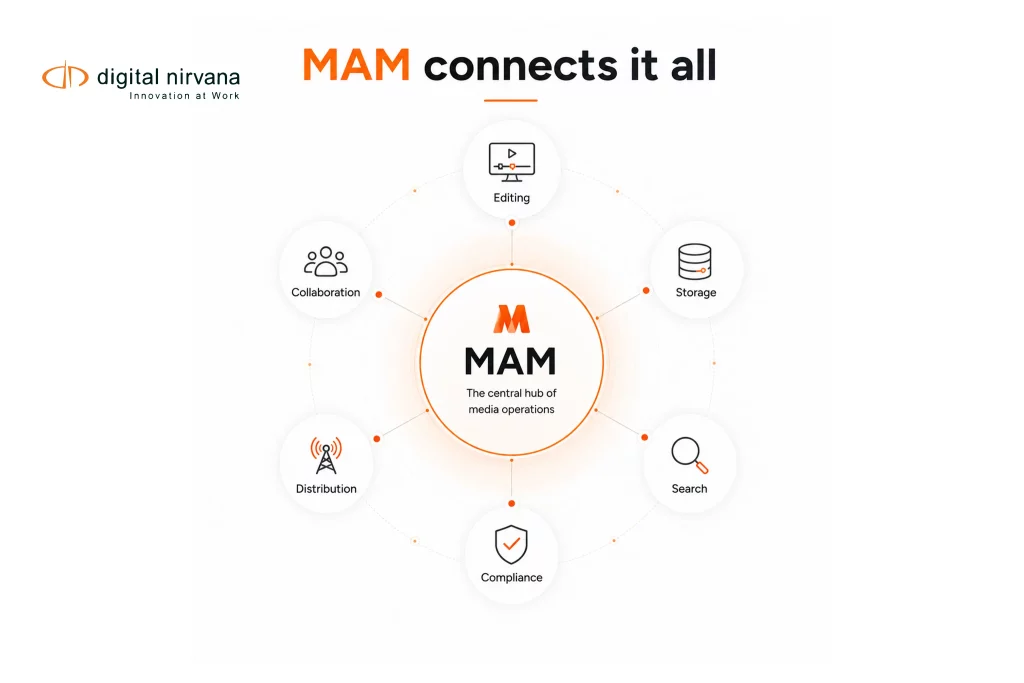

How This Connects To Digital Nirvana’s Operational DNA

Digital Nirvana has long operated quality-critical, real-time workflows where human oversight is built into delivery, including multi-stage review and post-delivery audits in transcription and captioning operations.

Managed AI applies the same operational rigor to modern enterprise AI systems: structured review, measurable quality controls, closed-loop improvement, and governance that goes beyond dashboards.

Meet Digital Nirvana At NAB Show 2026

If you’re attending NAB Show 2026, meet Digital Nirvana at Booth N1555 in Las Vegas, April 19–22, 2026. While MetadataIQ is the core showcase, the booth is also a good place to talk about the operational reality of running AI in production, including governance, review workflows, and how teams keep accuracy stable over time.

FAQs

Managed AI is an operational service layer that keeps AI reliable in production through continuous evaluation, prompt QA, domain-aware data labeling, and human-in-the-loop review pipelines.

Monitoring tools are necessary, but they often focus on system metrics and automated checks. Managed AI adds structured human review, regression testing, and closed-loop remediation to catch and fix subtle accuracy and relevance issues earlier.

Prompts should be re-tested any time they change, and on a regular schedule for high-impact workflows. GenAI QA guidance commonly recommends stress testing, regression suites, and comparison against prior outputs to catch unintended side effects.

Human-in-the-loop review is warranted when outputs are low-confidence, high-impact, policy-sensitive, or novel. HITL frameworks are typically used to balance automation with accountability for the decisions that matter most.

Conclusion

Deploying AI is the start. Operating AI is the real work, especially when quality, safety, relevance, and trust must hold steady through changing users, data, and business conditions.

Key Takeaways:

- Production AI needs continuous evaluation of real outputs, not just launch testing.

- Prompts require QA, stress testing, and regression checks to prevent prompt drift and unintended behavior changes.

- Domain-aware labeling improves training and evaluation data where generic labels fail.

- Human-in-the-loop pipelines add accountability for high-risk and low-confidence decisions, while feeding improvements back into the system.

- Managed AI works best as a closed-loop operating cycle with verified fixes and audit-ready documentation.