The increasing use of mobile devices has made video the most powerful way to reach a target audience. A Cisco study supports this, projecting that by 2023 video will make up more than 82% of all consumer internet traffic. Adding to the case for video as a storytelling medium, another study suggests that 80% of people are more likely to watch a video when captions are available.

Captions are the most critical factor in video accessibility, as they make video accessible to viewers who don’t understand the language or who are deaf or hard of hearing. The term "captions" is often used interchangeably with closed captions, open captions, and subtitles. Before we move forward, let’s understand the differences between them.

Subtitles translate the spoken audio into a language that the viewer understands. They assume that the viewer is not deaf or hard of hearing, so they do not include non-speech elements.

Open captions and closed captions usually serve the same goal. Both are developed specifically for people who are deaf or hard of hearing. Apart from the text version of the spoken language, both contain non-speech elements, such as sound effects, that are important for understanding the video. However, there is one major difference between them: open captions are burned into the video and cannot be turned off. Closed captions, on the other hand, are published as a sidecar file. They can be turned on or off and are identified by the [CC] symbol.
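To make the idea of a sidecar concrete, here is a minimal sketch of how caption cues could be written out as a separate text file that a player loads alongside the video. It assumes WebVTT as the sidecar format, which is our choice for illustration and not something the discussion above prescribes; the `Cue` class and the helper names are hypothetical. Note that the first cue carries a non-speech element in square brackets.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    """One caption cue (hypothetical helper type for this sketch)."""
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    text: str     # spoken words, or a non-speech element such as "[door slams]"


def format_timestamp(seconds: float) -> str:
    """Format seconds as a WebVTT timestamp, HH:MM:SS.mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def to_webvtt(cues: list[Cue]) -> str:
    """Serialize cues as the text of a WebVTT sidecar file."""
    lines = ["WEBVTT", ""]  # required header, then a blank line
    for cue in cues:
        lines.append(f"{format_timestamp(cue.start)} --> {format_timestamp(cue.end)}")
        lines.append(cue.text)
        lines.append("")  # a blank line separates cues
    return "\n".join(lines)


cues = [
    Cue(0.0, 2.5, "[door slams]"),   # non-speech element
    Cue(2.5, 5.0, "Who's there?"),   # spoken dialogue
]
vtt_text = to_webvtt(cues)
print(vtt_text)
```

Because the captions live in their own file rather than in the picture, the player can show or hide them on request, which is what distinguishes them from open captions.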

Why are closed captions important?

A WHO study projects that by 2050 nearly 2.5 billion people will have some degree of hearing loss, and closed captions are the only way to make video content accessible to them. Closed captions include not just the text version of the speech but also non-speech elements, such as sound effects, that are important for understanding the video. Adding closed captions to videos increases accessibility, improves user experience and average watch time, and improves SEO.

However, closed captions were not part of TV shows or movies until the 1970s. They were used for the first time in 1971, at a conference for the hearing impaired in Nashville, Tennessee. A second demonstration followed in 1972 at Gallaudet University, where the National Bureau of Standards and ABC showcased closed captioning embedded in a broadcast of The Mod Squad. The National Captioning Institute was founded in 1979, and in 1982 the institute developed a process of real-time captioning to enable captions in live broadcasting. The National Captioning Institute helped American television begin full-scale use of closed captions.

Masterpiece Theatre on PBS and Disney’s Wonderful World: Son of Flubber on NBC were the first programs to be seen with closed captioning.

Captioning Laws & Guidelines

In the early 90s, the U.S. Congress passed the Television Decoder Circuitry Act of 1990, allowing the Federal Communications Commission (FCC) to set the rules for implementing closed captioning. The act was a big step toward equal opportunity for people with hearing impairments, and it was passed the same year as the Americans with Disabilities Act (ADA).

FCC Guidelines

The FCC adopted rules mandating closed captioning of video programming in 1998, establishing benchmarks for a phase-in of captioning over the years that followed. The rules established closed captions as the critical link to news, entertainment, and information for individuals who are deaf or hard of hearing. Congress requires video programming distributors (VPDs), which include cable operators, broadcasters, satellite distributors, and other multi-channel video programming distributors, to caption their TV programs.

FCC rules for TV closed captioning ensure that deaf and hard-of-hearing viewers have full access to programming, address captioning quality, and provide guidance to video programming distributors and programmers. The rules apply to all television programming with captions, requiring that captions be accurate, synchronous, complete, and properly placed.

The rules distinguish between pre-recorded, live, and near-live programming and explain how the standards apply to each type of programming, recognizing the more significant hurdles involved with captioning live and near-live programming.

DCMP Guidelines

The Described and Captioned Media Program (DCMP) is the nation’s leading source of accessible educational videos. DCMP is funded by the Department of Education (ED). DCMP lays down the following rules for captioning videos:

Open Captions

Modified Closed Captions

Presentation Rate

DCMP’s closed caption quality guidelines are similar to those specified by the FCC in 2014. The essential DCMP captioning quality guidelines are:

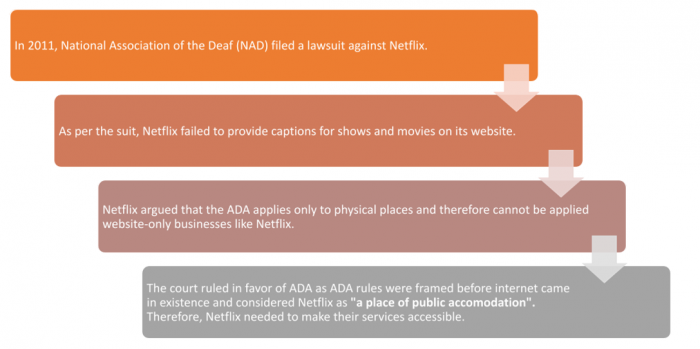

NAD vs. Netflix

21st Century Communications and Video Accessibility Act (CVAA)

The 21st Century Communications and Video Accessibility Act (CVAA) was signed into law on October 8, 2010, by President Obama. The CVAA updates federal communications law to improve access to modern communications for persons with disabilities. It ensures that accessibility laws keep pace with 21st-century technologies, including new digital, broadband, and mobile innovations. Title II of the CVAA lays out the guidelines for video programming.

Following are the guidelines for closed captions:

Web Content Accessibility Guidelines (WCAG)

The Web Content Accessibility Guidelines (WCAG) are developed through the World Wide Web Consortium (W3C) in collaboration with individuals and organizations worldwide. The goal of WCAG is to provide a single shared standard for web content accessibility that meets the needs of individuals, organizations, and governments internationally.

WCAG is intended for:

Captioning guidelines as per WCAG 2.0 are:

Perceivable: Content should be presentable in different ways, including through assistive technologies. Multimedia should have captions and other text alternatives.

Operable: Functionality should be accessible from the keyboard, with the option of input from other devices. Viewers should be given enough time to read the captions, and the captions should not obstruct the video in any way.

Understandable: The text on the screen should be readable. The appearance and operations should be predictable.

Robust: Content must remain compatible with current and future user tools.
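The Operable point above asks that viewers get enough time to read the captions. One simple way to check this is to measure each cue's presentation rate in words per minute and flag cues that exceed a chosen limit. The sketch below is illustrative only: the function names are hypothetical, and the 160 words-per-minute limit is a placeholder we picked for the example, not a figure taken from WCAG or any guideline discussed here.

```python
# Placeholder limit for this example only; pick a value from the
# guideline you actually need to follow.
ILLUSTRATIVE_MAX_WPM = 160.0


def words_per_minute(text: str, start: float, end: float) -> float:
    """Presentation rate of one cue, given its start and end in seconds."""
    duration = end - start
    if duration <= 0:
        raise ValueError("a cue must end after it starts")
    return len(text.split()) * 60.0 / duration


def cues_too_fast(cues, max_wpm=ILLUSTRATIVE_MAX_WPM):
    """Return the (text, start, end) cues whose rate exceeds max_wpm."""
    return [cue for cue in cues if words_per_minute(*cue) > max_wpm]


cues = [
    ("Welcome back to the show.", 0.0, 2.0),                          # 5 words in 2 s
    ("I never said that and you know it perfectly well.", 2.0, 4.0),  # 10 words in 2 s
]
flagged = cues_too_fast(cues)
for text, start, end in flagged:
    print(f"{start:.1f}-{end:.1f}s is too fast: {text}")
```

A flagged cue can then be given more screen time or split into two shorter cues.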

WCAG also lays down guidelines for streaming media, requiring that all content include captions and be provided in a format that allows closed captions to be embedded.

To learn how to generate quick and accurate captions that adhere to all of these guidelines, visit our website www.digital-nirvana.com or write to us at marketing@digital-nirvana.com.