Hybrid AI dubbing services pair advanced models with human direction so you can publish the same video in ten languages within days, not months. Viewers expect native‑sounding voices, consistent terms, and lip‑matched timing even on short social clips. Creators win when they scale quality fast, keep brand tone intact, and control costs with a reliable AI dubbing tool and an expert review bench. Compared with AI-only, a hybrid workflow lifts accuracy, emotional nuance, and localization quality in every market. Global audiences reward that mix with longer watch time, better search reach, and higher conversions.

What Is Hybrid AI Dubbing and How It Works Today

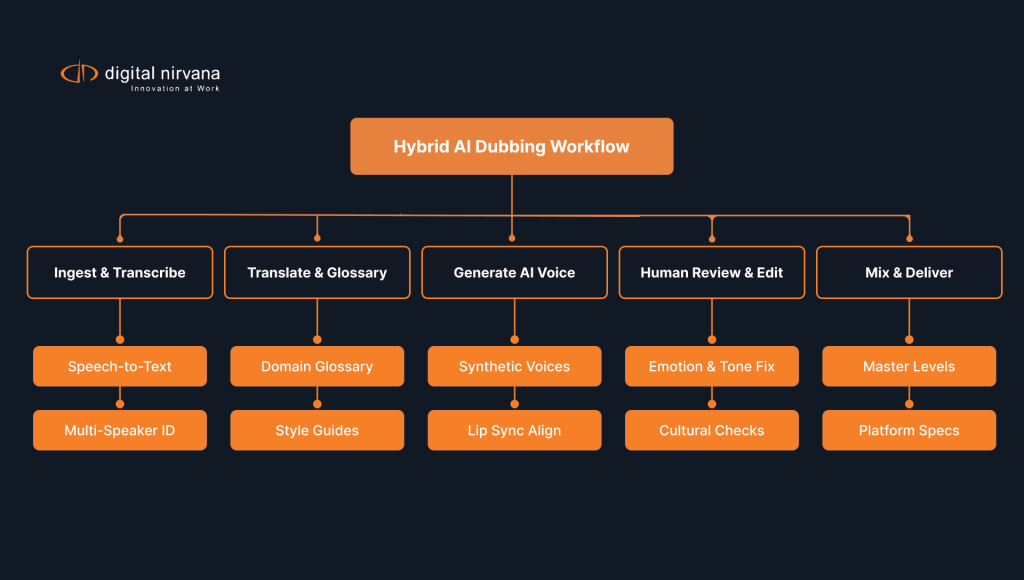

AI dubbing replaces the original speech in a video with new, language‑specific voice tracks that match timing, tone, and context. Teams feed the source audio to speech‑to‑text, run neural machine translation, and generate voices with advanced text‑to‑speech. Editors align the new takes to on‑screen mouth movements and scene beats. Linguists review phrasing, actors refine emotion, and engineers master levels for each platform. This pipeline keeps creative intent intact while the content travels to new markets at scale. A hybrid approach outperforms AI-only by improving accuracy, emotional nuance, and localization quality.

What Makes Hybrid AI Dubbing Different from Traditional Voiceovers

Traditional voiceovers rely entirely on human talent for translation, performance, and timing. Hybrid AI dubbing injects automation into transcription, translation, and voice generation while directors and actors steer tone, timing, and cultural fit. Editors guide pacing and intent so the track feels native and credible. Teams ship more languages per budget because AI handles repetitive steps while humans tackle nuance. Audiences benefit from faster releases and a viewing experience that still reflects the original style.

Core workflow contrasts

- Human‑only voiceover uses manual transcription, manual translation, and live sessions for every line.

- AI‑assisted dubbing automates transcription and first‑pass translation, then generates voice candidates for review.

- Directors craft final emotion and emphasis to reflect character arcs and scene intent.

- Reviewers run cultural checks to avoid literal but awkward phrasing.

- Engineers finish with platform‑specific loudness and mix targets.

What Role AI Voice Cloning Plays in Localization

Voice cloning creates a synthetic version of a speaker’s voice that can deliver lines in new languages. Creators keep brand identity stable across regions because the cloned voice carries familiar timbre and cadence. Legal and ethical guardrails matter, so teams gather clear consent, follow usage terms, and store training data securely. Producers adjust pitch, pace, and articulation to land a natural read for each market. With responsible use, cloning speeds global versions while the creative team stays in control.

Practical voice cloning tips

- Secure explicit, written consent from talent before you record training data.

- Keep training clips clean, labeled, and free of background noise.

- Calibrate target emotion per scene to avoid flat reads.

- Test pronunciation dictionaries for names, brands, and industry jargon.

- Log every cloned deployment for legal clarity and future audits.

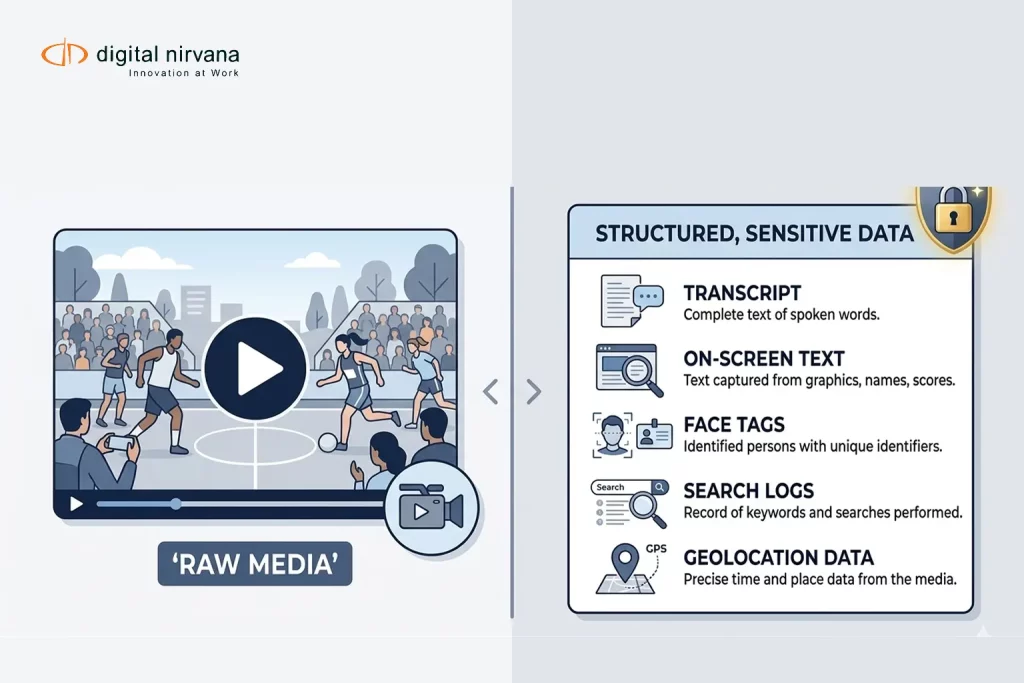

What Tools Support a Hybrid Dubbing Workflow

Modern stacks include speech‑to‑text, translation, TTS voices, and alignment software. Content teams combine best‑in‑class engines for each step, then route files through a central project hub. Editors rely on timing tools to nudge syllables into sync with lip shapes. Linguists plug glossaries into translation to protect product names and technical terms. Producers track every version with strict naming and change control, so nothing slips between edits. Many teams anchor this stack with TranceIQ for captions and translation and MetadataIQ for searchable, governed metadata that speeds review and retakes.

Feature checklist for your stack

- Accurate diarization for multiple speakers and cross‑talk moments.

- Domain‑tuned translation with glossary and style controls.

- Emotion‑aware TTS that supports pauses, emphasis, and SSML.

- Phoneme‑level alignment for tighter lip sync.

- Mix presets for YouTube, OTT, broadcast, and social.
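
To make the SSML item in the checklist concrete, here is a minimal sketch of how a team might mark up one dubbed line with a speaking rate, an emphasized phrase, and a pause. The `speak`, `prosody`, `emphasis`, and `break` elements come from the W3C SSML standard, but the helper name `build_ssml` and its parameters are our own illustration, and individual TTS engines support different subsets of SSML, so check your vendor's documentation before relying on any tag.

```python
import xml.etree.ElementTree as ET


def build_ssml(before: str, emphasized: str, after: str,
               pause_ms: int = 300, rate: str = "medium") -> str:
    """Wrap one dubbed line in SSML (hypothetical helper, not a vendor API).

    The line is split into text before the emphasized phrase, the phrase
    itself, and text after a pause of `pause_ms` milliseconds.
    """
    speak = ET.Element("speak")
    # <prosody rate="..."> sets the speaking rate for the whole line.
    prosody = ET.SubElement(speak, "prosody", {"rate": rate})
    prosody.text = before
    # <emphasis level="strong"> stresses a brand name or key term.
    emphasis = ET.SubElement(prosody, "emphasis", {"level": "strong"})
    emphasis.text = emphasized
    # <break time="..."/> inserts the pause; the remaining text follows it.
    pause = ET.SubElement(prosody, "break", {"time": f"{pause_ms}ms"})
    pause.tail = after
    return ET.tostring(speak, encoding="unicode")


if __name__ == "__main__":
    print(build_ssml("Meet ", "TranceIQ", " for captions and translation.", pause_ms=250))
```

Building the markup with an XML library rather than string concatenation means characters such as `&` in a script line are escaped automatically, which avoids malformed requests to the voice engine.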

How Digital Nirvana Supports Hybrid AI Dubbing

Our services at Digital Nirvana combine AI speed with human quality control so your dubbed video lands with context and clarity. The Subs and Dubs service brings AI voices and human review into one streamlined workflow for fast, natural results. Editors pair TranceIQ for transcription, captions, and translation with MetadataIQ for terminology control, which keeps voice tracks consistent across episodes and markets. Compliance and quality teams rely on MonitorIQ to verify delivery standards and keep detailed audit trails. This hybrid approach saves time and cost while protecting creative intent and brand voice.

What’s Driving the Shift to Hybrid AI Dubbing Services

Studios, streamers, and brands want faster releases, broader reach, and steady quality. Hybrid AI dubbing services blend automation with human craft, which meets all three goals at once. AI dubbing software takes care of repetitive tasks and scale, while directors, linguists, and voice actors steer tone and cultural choices. Budgets stretch further without gutting the creative result. Teams then ship day‑and‑date launches across languages and keep momentum across seasons. For deeper context on monitoring and compliance that support this shift, see our blog on a digital broadcast monitoring system and our guide to AI metadata tagging for searchability.

What Hybrid AI Means in the Dubbing Workflow

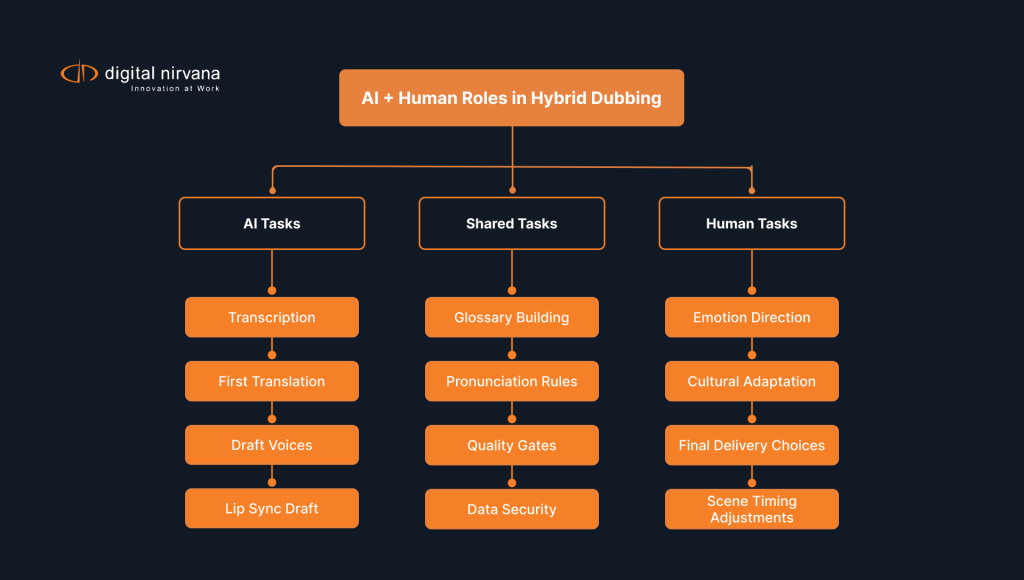

Hybrid means AI accelerates the pipeline, and people keep creative authority. Speech‑to‑text and translation set a strong base, synthetic voices deliver initial reads, and human pros edit for rhythm, humor, and emotion. Directors pick takes, tune phrasing, and shape character dynamics. Linguists resolve idioms and regional slang that literal translation misses. Engineers lock mix specs per platform so tracks play clean everywhere.

A clear division of labor

- AI: transcription, first‑pass translation, draft voices, and alignment.

- Human: intent, tone, cultural fit, and final delivery choices.

- Shared: glossary building, pronunciation rules, and quality gates.

- Governance: consent, rights, and data security throughout the lifecycle.

- Metrics: turnaround, error rate, and audience retention by locale.

What Advantages Come from Combining AI and Human Voice Talent

The hybrid dubbing model delivers speed and quality together. AI trims hours from transcription and timing, which shortens edit sessions. Voice actors elevate key scenes, handle sarcasm, and react to on‑screen beats with natural timing. Producers keep brand voice intact because people control phrasing and emphasis in tricky moments. Viewers enjoy credible characters and clear delivery, which lifts completion rates and shares.

Where hybrid shines most

- Fast‑moving news, education, and product explainers.

- Ongoing series with tight weekly schedules.

- Catalog localization for multi‑season shows.

- Global ad flights that need consistent brand voice.

- Social campaigns that refresh weekly or daily.

What Quality and Speed Gains to Expect from Hybrid Models

Teams often cut cycle time by half when they lean on AI for setup and alignment. Error rates drop with glossary enforcement and term validation across markets. Directors spend time on story beats rather than raw timing, which improves acting and flow. Mix engineers start from presets, then fine‑tune for scene dynamics, which keeps output consistent. Stakeholders review earlier in the process, so changes land before final mix and delivery.

Practical KPIs to track

- Turnaround per finished minute from ingest to master.

- Percentage of lines edited after first AI pass.

- Glossary adherence and pronunciation accuracy.

- Retention curves by locale across the first 30 seconds and the final minute.

- Cost per language per minute over time.
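
The arithmetic behind three of these KPIs is simple enough to sketch. The example below assumes you already log, per language, the finished runtime, the ingest‑to‑master hours, the number of lines a human changed after the first AI pass, and total spend. The `DubJob` fields and the `kpis` function are hypothetical names for illustration, not part of any product.

```python
from dataclasses import dataclass


@dataclass
class DubJob:
    """One delivered language version (illustrative record, assumed fields)."""
    locale: str
    finished_minutes: float   # runtime of the delivered master
    turnaround_hours: float   # elapsed time from ingest to master
    lines_total: int          # dialogue lines in the script
    lines_edited: int         # lines a human changed after the first AI pass
    cost: float               # total spend for this language, one currency


def kpis(job: DubJob) -> dict:
    """Compute per-minute turnaround, post-AI edit rate, and cost per minute."""
    if job.finished_minutes <= 0 or job.lines_total <= 0:
        raise ValueError("finished_minutes and lines_total must be positive")
    return {
        "turnaround_hours_per_minute": job.turnaround_hours / job.finished_minutes,
        "edit_rate_pct": 100.0 * job.lines_edited / job.lines_total,
        "cost_per_minute": job.cost / job.finished_minutes,
    }


if __name__ == "__main__":
    # Sample numbers are made up purely to show the calculation.
    job = DubJob("es-MX", finished_minutes=10.0, turnaround_hours=20.0,
                 lines_total=200, lines_edited=50, cost=500.0)
    print(kpis(job))
```

Tracking the same three numbers per locale over time shows whether glossaries and reusable voices are actually reducing edits and unit cost.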

What Content Creators Gain from Hybrid Dubbing Services

Creators want reach, speed, and creative control. Hybrid dubbing services provide all three with a workflow that scales. A flexible stack supports single‑creator channels and studio networks alike. Teams ship more languages per budget, which opens markets that once felt out of reach. The same tools also help with accessibility because viewers can choose language, captions, and descriptive audio in one place. For revenue ideas tied to localization and enrichment, explore how metadata drives ROI in our blog on metadata and content monetization.

What Types of Video Content Fit Hybrid Dubbing

Education platforms localize lesson libraries and onboard new regions with less friction. Entertainment teams adapt trailers, episodic content, and behind‑the‑scenes clips to fan communities worldwide. Brands dub product demos, help videos, and webinar highlights, which shortens sales cycles in new markets. Newsrooms and analysts translate on‑camera explainers and graphic‑driven features for fast syndication. Nonprofits and public agencies extend reach for safety messages and community updates. If you are mapping prerequisites for voiceovers, our primer on what transcription is clarifies how accurate text and timestamps feed clean dubs.

Format‑specific notes

- Tutorials and explainers benefit from glossary‑driven translation.

- Drama needs stronger human direction for subtext and humor.

- Animation tolerates more timing adjustments because lip shapes vary.

- Sports highlights require tight sync around play‑by‑play moments.

- Corporate training benefits from consistent narrator identity across modules.

What Impact Hybrid Dubbing Has on YouTube and Social Media Videos

YouTube channels grow international watch time when they post dubbed versions alongside the original. Shorts, Reels, and TikTok clips carry new languages without slowing the posting cadence. Creators test titles, descriptions, and thumbnails per region to match search and culture. Community captions and comments help refine term choices over time. Subscribers respond to consistent voices and familiar pacing, which boosts loyalty and session length.

Platform tactics that work

- Create separate playlists per language to help discovery.

- Post the dubbed cut within 24 to 72 hours of the original.

- Localize on‑screen text and lower thirds, not just the voice track.

- Add region‑specific CTAs for commerce and newsletters.

- Measure A/B tests on intros and outros by market.

What Time and Cost Savings Look Like with Hybrid Workflows

Hybrid workflows shorten setup and reduce the need for full studio time on every line. Editors start from aligned, translated scripts and synthetic takes, which speeds decision‑making. Directors schedule shorter actor sessions for the hardest scenes, not the entire program. Accounting sees a clearer unit cost per finished minute and can commit to more languages in the same budget. Over time, glossaries and pronunciation rules compound these gains.

Budget levers you control

- Reuse narrator voices for series and episodic runs.

- Standardize mix templates to cut mastering hours.

- Maintain a living glossary for terms, product names, and slang.

- Bundle regional releases to share setup costs.

- Track redo rates to find training and tooling gaps.

What Sets Hybrid Dubbing Apart from AI-Only and Traditional Services

The dubbing world now runs on three models: AI-only, traditional-only, and hybrid. AI-only moves fastest but can miss subtext, local idioms, and brand tone. Traditional-only delivers premium performances but requires longer timelines and higher costs. Hybrid combines automation for scale with human craft for emotion and context, which raises accuracy and audience trust without blowing budgets. The real choice centers on scale, timeline, and the emotional range your project requires.

- AI-only: fastest setup, risk of flat emotion and terminology drift.

- Traditional-only: strongest performance, higher cost and longer cycles.

- Hybrid: speed from AI plus human nuance, consistent quality across markets.

What Traditional Dubbing Still Does Well

Traditional sessions shine when a story hinges on subtle emotion and rapid turn‑taking. Experienced actors improvise within the director’s intent and react to micro‑beats on screen. Editors shape energy with frame‑level timing moves that sync laughs, gasps, and pauses. This craft delivers a premium track for tent‑pole releases, theatrical cuts, and prestige campaigns. Brands still pick full live dubbing for flagship spots and character‑driven narratives.

When to favor traditional sessions

- Feature films and high‑stakes drama.

- Complex ensemble scenes with overlapping dialogue.

- Comedic timing that relies on precise beats.

- Celebrity talent with recognizable deliveries.

- Launch trailers that set long‑term brand tone.

What AI Voice Technology Now Offers Creators

Voices generated with artificial intelligence now cover wide ranges of gender, age, and regional styles. Directors control pitch, speed, emphasis, and pause length to match on‑screen motion. Emotion controls add warmth, urgency, confidence, or calm as scenes demand. Alignment tools tighten syllables to reduce mismatch with lip movements. With strong direction, AI voices carry many formats without sounding robotic.

Guardrails for natural results

- Pick voices that fit character age and context.

- Use emotion presets sparingly, then fine‑tune with manual direction.

- Break long lines into shorter clauses to improve rhythm.

- Mix to human levels, not just loudness targets.

- Run audience checks with small focus groups before wide release.

What Voice Actors Contribute in a Hybrid Approach

Voice actors anchor the most important moments and set the creative bar for the project. They guide pronunciation choices, calibrate slang, and keep character arcs steady across episodes. Their takes serve as references for AI voices in less critical scenes. Actors and directors also handle any lines that demand improvisation or delicate timing. This collaboration keeps quality high without slowing the release plan.

Collaboration best practices

- Record reference performances for key scenes early.

- Let actors review AI takes and flag lines that need re‑cuts.

- Preserve actor notes inside the project hub for future seasons.

- Credit contributors clearly across regions.

- Share audience feedback loops with the cast and crew.

How AI-Human Collaboration Shapes the Future of Dubbing

Hybrid collaboration will push voice quality, translation accuracy, and real‑time sync even further. Teams will route more of the pipeline through secure clouds where AI handles volume and humans direct performance at key beats. Glossaries will update automatically based on viewer feedback and new product launches while reviewers fine‑tune phrasing by region. Directors will shape voices with finer control over breath, grit, laughter, and micro‑pauses, then sign off faster with shared dashboards. Global campaigns will feel native in each market without ballooning budgets. For a metadata‑first view of this future, see how autometadata transforms operations in our blog on autometadata in broadcasting.

What Innovations Are Coming in AI Voice Generation

Next‑gen models synthesize breaths, micro‑intonations, and expressive breaks that mirror human reads. Producers will fine‑tune voices on project‑level data, not just generic training sets. Real‑time voice direction will allow line reads to change on the fly during edit. Scene‑aware engines will read shot metadata and adjust intensity and pace to match camera movement. These upgrades will shrink the gap between synthetic and studio‑recorded tracks.

R&D areas to watch

- Cross‑lingual voice cloning with stronger accent control.

- Scene‑aware prosody that tracks cuts and motion.

- Context windows that keep character consistency across long arcs.

- Low‑latency synthesis for live translation and events.

- On‑device safeguards for rights and consent checks.

What AI Translation Accuracy Means for Global Audiences

Better translation improves trust, humor, and clarity across markets. Domain‑tuned engines will read glossaries and style guides to maintain brand voice. Editors will spend less time rewriting literal phrasing and more time perfecting performance. Regional variants will ship together, so Mexico, Spain, and the U.S. each get phrasing that fits. Viewers will stick around longer because the content sounds like it came from their market in the first place. To manage AI quality and governance, many teams reference the NIST AI Risk Management Framework for practical risk controls across the lifecycle.

Translation quality pillars

- Terminology control with enforceable glossaries.

- Style rules per brand, product line, and region.

- Named‑entity handling for people, places, and trademarks.

- Reviewer workflows with side‑by‑side source and target.

- Feedback loops from subtitles, comments, and support tickets.
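
Terminology control is the easiest pillar to automate. As a rough sketch, the check below flags any glossary term that appears in a source line when its approved translation is missing from the target line. The function name, the sample glossary, and the sample sentences are illustrative assumptions. The matching is a plain case‑insensitive substring test, so it will miss inflected forms and can match inside longer words; a production check would need language‑aware matching.

```python
def glossary_violations(source: str, target: str, glossary: dict[str, str]) -> list[str]:
    """Return source terms whose approved translation is absent from the target.

    `glossary` maps a source-language term to its approved target-language
    rendering. Matching is a naive, case-insensitive substring test.
    """
    src = source.casefold()
    tgt = target.casefold()
    return [
        term
        for term, approved in glossary.items()
        if term.casefold() in src and approved.casefold() not in tgt
    ]


if __name__ == "__main__":
    # Illustrative glossary: one translated term, one protected product name.
    glossary = {
        "closed captions": "subtítulos ocultos",
        "MonitorIQ": "MonitorIQ",
    }
    source = "Turn on closed captions in MonitorIQ."
    target = "Active los subtítulos en MonitorIQ."
    print(glossary_violations(source, target, glossary))
```

Run against every line after the first translation pass, a check like this gives reviewers a short list of lines to look at first instead of a full read‑through.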

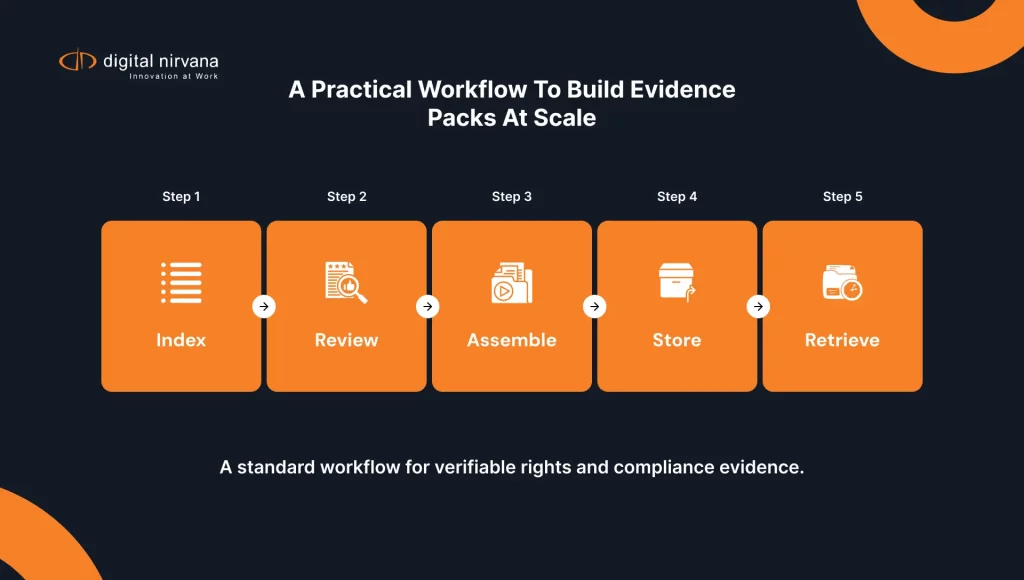

What Makes Hybrid Dubbing Scalable for International Campaigns

Scale comes from repeatable workflows, not one‑off heroics. Teams templatize ingest, approvals, and delivery so projects flow smoothly. A hybrid bench of actors, linguists, and editors handles spikes without chaos. Managers forecast language mix and volume so procurement lines up talent and compute ahead of time. Analytics show which languages drive watch time and revenue, which guides the next release.

Playbook for scale

- Standardize file naming, folder structure, and versioning.

- Centralize glossaries and pronunciation rules.

- Pre‑book actor time for peak weeks and launches.

- Automate QC checks for clicks, pops, and silence tails.

- Track release calendars across platforms and regions.
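
One of the automated QC checks in the playbook, long silence tails, can be sketched in a few lines. The example assumes the clip is already decoded to mono floating‑point samples in the range -1.0 to 1.0. The function names and the default threshold values are placeholders to tune per delivery spec, not an industry standard.

```python
def trailing_silence_seconds(samples, sample_rate, threshold=0.001):
    """Length in seconds of the near-silent run at the end of a mono clip.

    A sample counts as silent when its absolute value is at or below
    `threshold` (an assumed default; tune it for your noise floor).
    """
    quiet = 0
    for value in reversed(samples):
        if abs(value) > threshold:
            break
        quiet += 1
    return quiet / sample_rate


def has_long_silence_tail(samples, sample_rate, max_tail_s=1.0, threshold=0.001):
    """Flag clips whose trailing silence exceeds `max_tail_s` seconds."""
    return trailing_silence_seconds(samples, sample_rate, threshold) > max_tail_s


if __name__ == "__main__":
    rate = 1000  # tiny sample rate keeps the demo data small
    clip = [0.5] * rate + [0.0] * (2 * rate)  # 1 s of signal, 2 s of silence
    print(trailing_silence_seconds(clip, rate), has_long_silence_tail(clip, rate))
```

Clicks and pops need a different detector, typically one that looks for abrupt sample‑to‑sample jumps, so treat this as one gate among several.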

What to Consider Before Choosing a Hybrid Dubbing Partner

A strong decision looks beyond a demo reel. Evaluate the partner’s voice bench, linguist network, director experience, governance, SLAs, security, and integrations. Run a pilot on a full scene, not just a clip, with human review at key beats. Finance compares per‑minute pricing to blended rates that include direction and QC. Legal reviews consent, data handling, and rights across every market.

What Features Matter in a Hybrid Dubbing Service

Look for multi‑speaker diarization, domain‑tuned translation, and emotion‑aware TTS. Require glossary enforcement and pronunciation dictionaries that you can edit. Demand phoneme‑level alignment and timeline export for your NLE. Insist on mix presets for YouTube, OTT, broadcast, and radio. Ask for dashboards that track turnaround, redo rates, and quality at scale. For accessibility standards that affect many projects, review the FCC’s guidance on closed captioning quality standards as you plan voice and caption workflows.

Must‑have capabilities

- Script import and scene segmentation with timecodes.

- SSML controls for emphasis, pitch, and pauses.

- Batch processing for large catalogs.

- Collaboration with roles, notes, and approvals.

- Export formats that match your delivery specs.

What to Look for in Voice Quality and Emotion Accuracy

Quality starts with the right voice for the character or brand. Test voices on emotional peaks and quiet moments, not just neutral lines. Push for reads that carry subtext, humor, and sarcasm where the script calls for it. Compare takes on lip sync, breath placement, and natural phrasing. Involve actors and directors in the evaluation and lock choices that hold up across scenes.

A simple test plan

- Use a three‑scene reel that covers calm, conflict, and joy.

- Include a tongue‑twister line with brand names and jargon.

- Score lip sync, timing, and emotion on a clear rubric.

- Review results with both creative and technical leads.

- Keep a shortlist of voices per market and genre.
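
A clear rubric can be as simple as a weighted average. The sketch below assumes reviewers rate each candidate voice from 1 to 5 on lip sync, timing, and emotion. The weights are hypothetical and should reflect what matters for your genre, and the function names are ours.

```python
# Hypothetical weights; adjust per genre (drama might weight emotion higher).
WEIGHTS = {"lip_sync": 0.4, "timing": 0.3, "emotion": 0.3}


def rubric_score(scores, weights=WEIGHTS):
    """Weighted average of 1-5 ratings across the rubric criteria."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


def rank_voices(candidates):
    """Return candidate voice names ordered from best to worst score."""
    return sorted(candidates, key=lambda name: rubric_score(candidates[name]), reverse=True)


if __name__ == "__main__":
    # Ratings below are made-up sample data.
    candidates = {
        "voice_a": {"lip_sync": 5, "timing": 4, "emotion": 3},
        "voice_b": {"lip_sync": 3, "timing": 3, "emotion": 5},
    }
    for name in rank_voices(candidates):
        print(name, round(rubric_score(candidates[name]), 2))
```

Scoring each of the three scenes separately before averaging makes it easier to spot a voice that handles calm lines well but falls apart in conflict.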

What Integration Options Exist with Your Current Workflow

Your hybrid dubbing partner should fit your edit, mix, and asset management stack. Look for plugins or APIs for Premiere Pro, Media Composer, Resolve, or your MAM. Demand simple import and export paths for captions, EDLs, and audio stems. Confirm SSO and role‑based access for producers, editors, actors, and reviewers. Ensure your cloud and storage policies keep project data safe across regions.

Integration checklist

- API endpoints for job submission and status.

- Webhooks for review, approval, and delivery events.

- Direct links to your captioning and compliance tools.

- Storage integrations for hot and cold tiers.

- Audit logs that trace every change to every line.
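
When you evaluate webhooks, ask how the partner lets you verify that an event really came from them. A common pattern is an HMAC signature over the raw request body. The sketch below shows that general technique with Python's standard library. The event name, payload fields, and signing scheme are all hypothetical, since every vendor defines its own.

```python
import hashlib
import hmac
import json


def verify_and_parse(body: bytes, signature_hex: str, secret: bytes) -> dict:
    """Check an HMAC-SHA256 signature, then decode the JSON payload.

    Assumes the sender signs the raw body with a shared secret and sends
    the hex digest alongside it (a common but not universal scheme).
    """
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(expected, signature_hex):
        raise ValueError("signature mismatch")
    return json.loads(body)


if __name__ == "__main__":
    secret = b"demo-secret"  # placeholder; load real secrets from secure storage
    # Hypothetical event shape for a review approval.
    body = json.dumps({"event": "review.approved", "job_id": "job-1"}).encode()
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    print(verify_and_parse(body, signature, secret))
```

Rejecting unsigned or tampered events before they reach your project hub keeps approval and delivery records trustworthy for later audits.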

How Digital Nirvana Helps You Localize at Scale

At Digital Nirvana, we help you plan and ship multilingual versions with a hybrid model and predictable quality. Teams combine MetadataIQ for terminology control, TranceIQ for transcription, captions, and translation, and MediaServicesIQ for automation at scale. Producers fold the Subs and Dubs service into the edit to balance speed and nuance with a human‑in‑the‑loop model. Compliance teams rely on MonitorIQ for air‑check proof and audit trails. This stack shortens cycles, lifts accuracy, and gives your audience a better viewing experience in every market.

In summary…

A hybrid approach to AI dubbing delivers speed, quality, and scale without losing creative control. The right mix of automation and human craft turns one master into many native‑sounding versions on a predictable schedule. Use strong governance, repeatable workflows, and a clear playbook to protect rights and maintain standards as you grow.

Benefits at a glance

- Faster turnarounds across more languages per budget.

- Consistent brand voice through glossaries and controlled voices.

- Higher watch time and conversions in each target market.

- Tighter lip sync and clearer delivery that feels native.

- Predictable costs with reusable voices and templates.

Where hybrid fits best

- Ongoing series, education libraries, and product demos.

- Global ad flights and social campaigns with weekly refreshes.

- Large catalogs that need steady, reliable localization.

- Markets that require regional dialect and cultural nuance.

- Teams that value both speed and storytelling.

How to start strong

- Pilot on one episode or module with two target languages.

- Build a glossary and pronunciation guide before you dub.

- Set KPIs for turnaround, error rate, and retention.

- Involve actors and directors on pivotal scenes.

- Lock a release calendar and measure outcomes per locale.

Digital Nirvana supports content creators, broadcasters, and brands with AI‑assisted localization workflows that respect creative intent and viewer time, which in turn boosts viewer engagement. Talk with our team, share your use case, and see how a hybrid model can expand reach while you keep quality high.

FAQs

What is the difference between AI dubbing and voice cloning?

AI dubbing covers the full process from transcription and translation to voice generation and mix. Voice cloning focuses on recreating a specific speaker’s voice for delivery, sometimes across languages. You can run AI dubbing without cloning when a neutral narrator voice suits the project. You can also pair cloning with AI dubbing when brand identity or character continuity matters. Both approaches work together when you want speed at scale and a familiar voice across regions.

Can AI dubbing match the emotional tone of human voices?

AI voices now carry a wide range of tones, from calm and warm to confident and urgent. Directors still guide emotion, pacing, and emphasis to match scene intent. For key moments, human actors set the gold standard and anchor the track. A hybrid plan assigns AI to straightforward lines and saves actor time for pivotal beats. With that mix, you protect emotion without slowing the schedule.

How does AI dubbing handle multiple languages?

A good stack uses domain‑tuned translation, enforceable glossaries, and region‑specific style guides. Editors test lines with native reviewers who understand slang, humor, and cultural cues. Teams build pronunciation dictionaries that keep names and product terms consistent. Release managers schedule region sets together so you ship Mexico, Spain, and the U.S. in one pass. This process scales to dozens of languages while you keep quality steady.

What content types are best suited for AI dubbing?

Education, explainers, news features, product demos, and corporate training see the fastest gains from AI dubbing. Entertainment and drama also benefit, especially for trailers, recaps, and non‑peak scenes. Social clips and Shorts pick up new audiences when you post dubbed versions quickly. Live events and sports highlights can leverage low‑latency tools for near‑real‑time updates. Your pilot should reflect the mix you publish most, not just a one‑off clip.

Is AI dubbing suitable for broadcast‑quality video?

Yes, when you run a hybrid model and respect delivery standards. Engineers still master loudness and dynamics for OTT, broadcast, and radio. Directors still calibrate tone and pacing for each scene, and actors handle tough moments. QC tools catch clicks, pops, and timing slips before release. The final track meets spec and carries the story the way your audience expects.