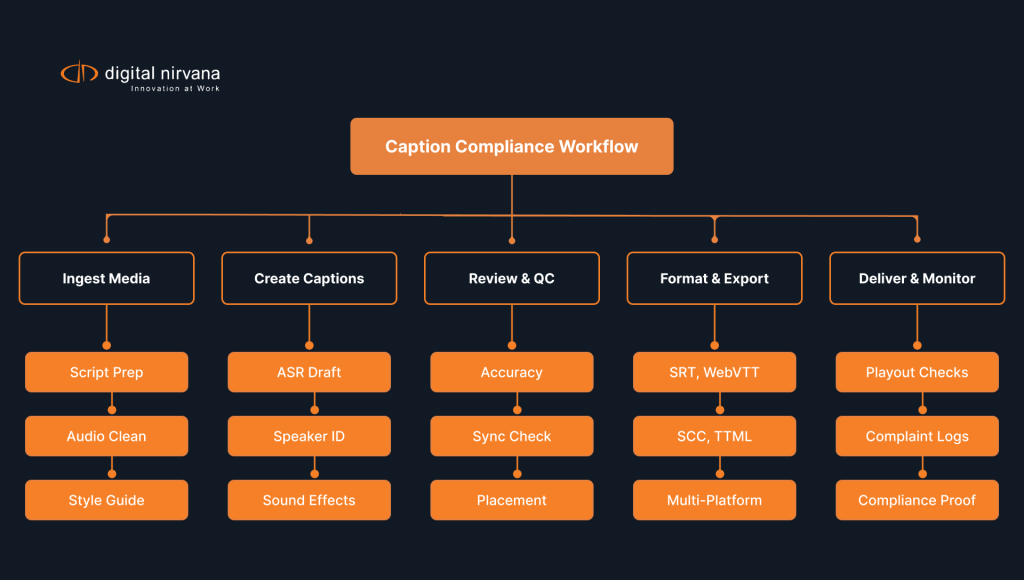

Closed captioning covers speech, music cues, and sound effects so viewers who cannot hear still follow the story. TV broadcasters must provide captions that stay accurate, synchronized, complete, and well placed on screen. Streaming platforms and social media apps expect caption files in common formats such as SRT, WebVTT, SCC, and TTML to keep playback smooth across devices. Open captions help creators reach global audiences on autoplay feeds where muted video is the norm. Teams that add captions early in production save time, control costs, and meet captioning laws without last-minute scrambles.

What Are the General Guidelines for Closed Captions

A strong captioning workflow protects access, reduces rework, and improves the viewing experience for everyone. Closed captions transcribe dialogue, identify multiple speakers, and describe key non-speech audio so the narrative makes sense without sound. Broadcasters, streamers, and content creators follow captioning guidelines that set quality bars for accuracy, timing, completeness, and placement. Your team can meet these bars with clear scripts, consistent speaker identification, and smart review cycles. When you treat captions as part of editorial, you deliver inclusive content that meets caption rules and audience expectations. You can also lean on Digital Nirvana’s closed captioning services for broadcast-ready output, and dig into styling tips in our blog on guidelines and fonts for closed captions.

What Makes Captions Accurate, Synchronized, and Complete

Accuracy means the words on screen match the spoken words, including slang, numbers, and proper nouns. Keep punctuation clean because commas, question marks, and ellipses shape meaning and pace for the viewer. Synchronize each caption with the exact audio moment so readers do not race ahead or lag behind the dialogue. Ensure completeness by covering all spoken content and critical sound effects, including music lyrics when they matter to plot or tone. Build a review step where a human editor checks automatic output, fixes misheard words, and confirms timing at scene changes.

What Rules Apply for Speaker Identification and Sound Effects

Clear speaker identification removes confusion, especially in scenes with multiple speakers or off-camera voices. Use labels such as “John:” or “Narrator:” at the start of a caption block when the voice is not obvious from the visuals. Mark sound effects in brackets, like “[door slams]” or “[phone ringing],” and keep descriptions short and factual. Describe music cues that carry meaning, such as “[somber music]” or a lyric line that advances the story. Maintain consistency across the program so viewers learn and trust your style choices from the first minute.

What File Formats Are Standard for Caption Delivery

Most platforms accept a few standard file formats, so choose the one that fits your toolchain and destination. SRT offers simplicity and broad support for streaming and social media uploads. WebVTT suits HTML5 playback and interactive environments where styling and positioning matter. SCC and MCC serve broadcast workflows that need frame-accurate timing and carry the CEA-608 and CEA-708 caption data used on TV. TTML and IMSC work well for large, global libraries where interchange across services matters more than lightweight editing.
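Because SRT and WebVTT are both plain text, moving between them is mostly a matter of header and timestamp syntax. The sketch below is a minimal illustration, assuming a simple, well-formed SRT input with no styling tags or positioning; `srt_to_webvtt` is a hypothetical helper name, not part of any product or library mentioned here.

```python
import re

# SRT writes timestamps as 00:00:01,000 (comma before the milliseconds).
SRT_TIMESTAMP = re.compile(r"(\d{2}:\d{2}:\d{2}),(\d{3})")


def srt_to_webvtt(srt_text: str) -> str:
    """Convert simple SRT text to WebVTT (illustrative helper)."""
    # Normalize line endings and drop a byte-order mark if present.
    text = srt_text.replace("\r\n", "\n").lstrip("\ufeff").strip()
    cues = []
    for block in re.split(r"\n{2,}", text):
        if not block.strip():
            continue
        lines = block.split("\n")
        # SRT cues start with a numeric counter; WebVTT does not need it.
        if lines[0].strip().isdigit():
            lines = lines[1:]
        if not lines:
            continue
        # WebVTT uses a period, not a comma, before the milliseconds.
        lines[0] = SRT_TIMESTAMP.sub(r"\1.\2", lines[0])
        cues.append("\n".join(lines))
    # Every WebVTT file begins with the WEBVTT header line.
    return "WEBVTT\n\n" + "\n\n".join(cues) + "\n"


sample = (
    "1\n00:00:01,000 --> 00:00:03,500\n[door slams]\n\n"
    "2\n00:00:04,000 --> 00:00:06,000\nJohn: Who's there?\n"
)
print(srt_to_webvtt(sample))
```

Broadcast formats such as SCC carry encoded caption data rather than plain text, so they need a dedicated converter rather than a text rewrite like this one.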

How Digital Nirvana Helps You Meet Caption Rules

Our services at Digital Nirvana blend automation with human expertise so you stay compliant and keep quality high. Producers create and polish captions in TranceIQ, then export WebVTT, SRT, SCC, or TTML straight to delivery. If you need a done-for-you option, our closed captioning services return human-verified files that match house style and FCC expectations. Teams that want translation, SDH, or transcripts can route work through our media enrichment solutions without juggling vendors. Engineering and compliance teams can validate air and OTT feeds using MonitorIQ to close the loop from creation to playout.

What Kinds of Captions Are Used Across Platforms

Different projects call for different caption types, and your distribution plan should guide that call. Closed captions allow the viewer to turn captions on or off through the player, which fits TV standards and many streaming apps. Open captions burn the text into the video so every viewer sees it, which helps in social media feeds where videos autoplay with the sound off. Subtitles present translated dialogue for multilingual content but usually skip non-speech audio, while SDH subtitles add sound effects and identification. Your mix may include closed captions for TV, open captions for social media teasers, and subtitles for international versions of the same cut. For more context, see our explainer on types of closed captions and this comparison of subtitles vs. closed captioning.

What Closed Captions Offer for Accessibility Compliance

Closed captions support compliance with captioning laws and open up content to people who are deaf or hard of hearing. Users control their viewing experience because the player provides an on or off toggle for closed captions. Broadcasters often rely on CEA-608 (formerly EIA-608) captions for legacy standard-definition feeds and CEA-708 captions for digital and HD feeds, then convert to WebVTT or SRT for over-the-top delivery. This flexibility helps one master caption file feed many distribution paths without recreating work. Closed captioning also improves search and discovery when platforms index caption text for recommendations and watch-next lists.

What Open Captions Mean for Streaming and Social Media

Open captions embed text in the picture, so the viewer sees captions even when the player offers no CC button. This approach shines on social media where users scroll with the sound muted and still need to follow the story. Open captions also reduce support tickets on platforms with inconsistent CC toggles because the text always appears. You should design open captions with strong contrast, safe positioning, and clear font choices since users cannot change styling. For long-form assets, you can pair open captions on trailers and clips with closed captions on full episodes to balance reach and control.

What Role Subtitles Play in Multilingual Content

Subtitles translate speech for viewers who understand the visuals but not the original language. For global releases, create a subtitle file for each target language and match timing with the original dialogue. When a show uses meaningful sound effects, consider SDH subtitles so international viewers get the full context. Keep line length tight so readers can scan while watching action-heavy scenes without losing the plot. Store subtitles as text, not as baked-in graphics, so you can update translations quickly when scripts change.

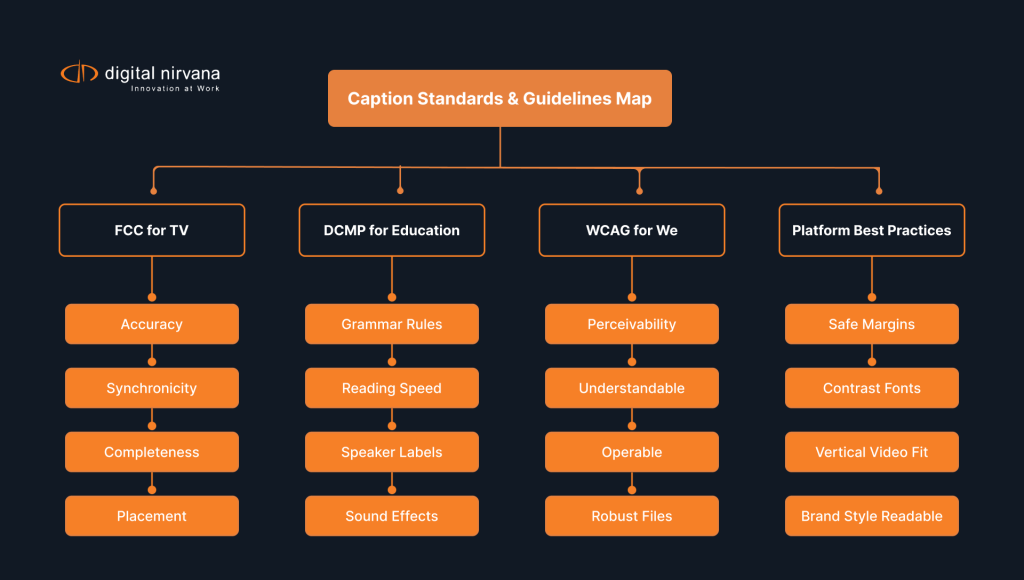

What the FCC Captioning Guidelines Require for TV Broadcasters

The FCC sets baseline captioning rules for TV that cover quality, timing, and responsibility. Broadcasters confirm that all new, non-exempt English and Spanish programs carry closed captions that meet accuracy, synchronicity, completeness, and placement standards. Stations keep records that show how they handle viewer complaints and how they maintain caption quality across vendors. Networks that simulcast to apps often carry these standards into streaming to keep a consistent viewer experience. When legal teams, engineering, and editorial coordinate, the operation avoids fines and protects audience trust. For reference, review the FCC’s summary of closed captioning rules for television.

What the Four Key FCC Standards Include

Accuracy requires correct words, spellings, and punctuation that reflect the content’s tone and intent. Synchronicity demands that captions start and end with the audio, including quick exchanges and overlaps. Completeness covers the entire program from the first spoken word to the last, including sponsor tags and promos inside the show. Placement keeps captions from blocking faces, graphics, or critical on-screen text and allows repositioning during lower-third animations. A captioning workflow that tracks these standards through ingest, edit, QC, and transmission keeps teams aligned and accountable.
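When captions consistently lead or trail the audio by a fixed amount, one common fix is to shift every timestamp by the same offset. The sketch below assumes WebVTT-style `HH:MM:SS.mmm` timestamps; `shift_timestamp` is a hypothetical helper, and it does not handle frame-based timecode such as the `HH:MM:SS:FF` values used in SCC files.

```python
def shift_timestamp(timestamp: str, offset_ms: int) -> str:
    """Shift an HH:MM:SS.mmm timestamp by offset_ms (negative = earlier)."""
    hours, minutes, rest = timestamp.split(":")
    seconds, millis = rest.split(".")
    total_ms = (
        (int(hours) * 60 + int(minutes)) * 60 + int(seconds)
    ) * 1000 + int(millis)
    # Clamp at zero so a large negative offset never yields a negative time.
    total_ms = max(0, total_ms + offset_ms)
    hours_out, remainder = divmod(total_ms, 3_600_000)
    minutes_out, remainder = divmod(remainder, 60_000)
    seconds_out, millis_out = divmod(remainder, 1000)
    return f"{hours_out:02d}:{minutes_out:02d}:{seconds_out:02d}.{millis_out:03d}"


# Captions that appear a quarter second late are pulled 250 ms earlier.
print(shift_timestamp("00:00:01.500", -250))
```

A constant offset only corrects uniform drift; captions that slip by different amounts across a program still need an editor to retime individual cues.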

What Penalties Apply for Non-Compliance with Captioning Laws

Regulators can impose fines, order corrective action, and require reporting when broadcasters ignore caption rules. A station that fails to fix recurring problems after viewer complaints may face stronger enforcement. Leadership teams reduce risk when they vet vendors, define service levels for live and recorded captioning, and audit performance. Legal and ops teams also train staff on response timelines so complaints get logged, answered, and resolved on record. Proactive investment in quality controls costs less than a consent decree, audience loss, and brand damage.

What Content Must Be Captioned Under FCC Rules

Most new, non-exempt television programming must carry closed captioning, including prime time series, news magazines, and live sports. Pre-recorded shows require high accuracy and clean placement because editors can polish timing before air. Live programs rely on trained stenographers or re-speakers, plus delay buffers that allow quick corrections during breaks. Clips and highlights that aired on TV with captions need captions when posted online under IP closed captioning rules. Reruns and syndicated packages also keep captions intact to meet both compliance and viewer expectations.

What the DCMP Captioning Guidelines Recommend for Educational Media

The DCMP guidelines shape caption quality for schools, libraries, and educational streaming platforms. Education audiences include early readers, English learners, and students who use captions as literacy support, so clarity matters. DCMP guidance sets specific expectations for grammar, punctuation, reading speed, and how to present speaker changes. Teams that follow these rules see higher comprehension and lower cognitive load in classrooms. When your program targets education buyers, align with DCMP to meet procurement checklists and learning outcomes.

What Standards Cover Grammar, Punctuation, and Presentation

Write captions with standard grammar and punctuation so readers focus on meaning, not on decoding. Use sentence case rather than all caps unless style guides or accessibility needs require otherwise. Keep line length short enough to scan quickly, and keep two-line blocks balanced to avoid a heavy second line. Place captions near the speaker when supported and away from on-screen text so viewers absorb both. Maintain a steady reading rate that suits the audience and the content’s energy without rushing readers.
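Reading rate can be estimated per caption as words shown divided by time on screen. The sketch below is one simple way to flag captions that may be too fast; the 160 words-per-minute ceiling is a placeholder chosen for illustration, not a figure taken from DCMP, so set it from the style guide you follow.

```python
from typing import List, Tuple

Cue = Tuple[float, float, str]  # (start seconds, end seconds, caption text)


def words_per_minute(text: str, start_s: float, end_s: float) -> float:
    """Estimate the presentation rate of one caption in words per minute."""
    duration = end_s - start_s
    if duration <= 0:
        raise ValueError("caption must end after it starts")
    return len(text.split()) * 60.0 / duration


def too_fast(cues: List[Cue], max_wpm: float = 160.0) -> List[Cue]:
    """Return the cues whose estimated rate exceeds max_wpm (assumed limit)."""
    return [c for c in cues if words_per_minute(c[2], c[0], c[1]) > max_wpm]


cues = [
    (0.0, 2.0, "We leave at dawn tomorrow"),        # 5 words in 2 seconds
    (2.0, 3.0, "Pack light and bring the maps"),    # 6 words in 1 second
]
print(too_fast(cues))
```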

What to Do for Captioning Content With Multiple Speakers

Multiple speakers raise the stakes for clarity because quick turn-taking can confuse readers. Use speaker labels or leading hyphens to mark speaker changes when the video does not show the speaker clearly. Introduce a speaker label the first time a new off-screen voice appears, then repeat only when needed. When panelists talk over one another, split the captions into separate lines inside the same timecode window to show overlap. In transcripts and downloadable subtitle files, keep the same labels so educators can use the text for assignments.
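As a rough illustration of the labeling conventions described here, the sketch below builds the text for one caption that holds one or more speaker turns. The function name and the exact layout (a `Name:` label when a label is supplied, a leading hyphen for unlabeled turns that share a timecode window) are assumptions for this example; your house style may differ.

```python
from typing import Optional, Sequence, Tuple

Turn = Tuple[Optional[str], str]  # (speaker label or None, spoken text)


def format_cue_text(turns: Sequence[Turn]) -> str:
    """Build caption text for turns that share one timecode window."""
    lines = []
    for speaker, text in turns:
        if speaker:
            # A label is used when the speaker is not clear from the picture.
            lines.append(f"{speaker}: {text}")
        elif len(turns) > 1:
            # Unlabeled overlapping turns get a leading hyphen, one per line.
            lines.append(f"- {text}")
        else:
            lines.append(text)
    return "\n".join(lines)


print(format_cue_text([("Narrator", "The storm arrived early.")]))
print(format_cue_text([(None, "We should wait."), (None, "No, we go now.")]))
```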

What the DCMP Says About Describing Sound Effects Clearly

Students rely on explicit descriptions of sound effects to understand cause and effect in lessons and documentaries. Use short, concrete words like “[thunder rumbles]” or “[crowd cheers]” rather than vague terms. Describe sounds only when they matter to meaning or mood, and avoid over-captioning that clutters the screen. Identify music by style or lyric when relevant to learning, such as “[jazz piano intro]” in a history clip. Align the description’s timing with the moment of impact so learners connect the text with the visual cue.

What WCAG Guidelines Say About Captioning for Web Content

WCAG sets the bar for web accessibility that covers video players, streaming apps, and social media embeds. Success Criterion 1.2.2 (Level A) requires captions for prerecorded audio in synchronized media, and Success Criterion 1.2.4 (Level AA) requires captions for live audio in synchronized media. Developers and content owners use WCAG success criteria to remove barriers in perceivability, operability, understandability, and robustness. When you meet WCAG for captions, your site serves more users and reduces legal exposure. Many legal teams treat WCAG 2.1 and 2.2 AA as the practical target for video accessibility today.

What WCAG Requires for Audio and Video Accessibility

Provide captions for prerecorded video with audio, and provide live captions for live streams if you target Level AA. Offer transcripts where they add value for study, search, or compliance with internal documentation rules. Ensure player controls for turning captions on and off work with keyboard and screen readers. Make caption files available in common formats such as WebVTT and SRT so assistive tech and archives can use them. Test your pages with real users who rely on captions to confirm that design choices support comprehension.

What Makes Captions Perceivable and Understandable Online

Perceivability starts with contrast and legibility, so choose fonts and sizes that read well on mobile and TV. Keep a consistent placement that avoids covering faces or lower-third graphics, and allow repositioning when the player supports it. Pace matters, so match reading speed to the material, and break long sentences into shorter, scannable lines. Use punctuation and line breaks to show pauses, tone, and emotional beats without over-explaining. When you produce interactive video or branching stories, ensure caption timing updates correctly as viewers jump between segments.

What Applies to Captioning on Social Media Platforms

Social media thrives on muted autoplay, so captions carry the story for scrollers who never turn on sound. Upload a clean subtitle file for each platform that supports it, and use open captions when a platform lacks robust CC features. Keep safe margins for vertical video so text stays clear on tall aspect ratios and small screens. Brand your open captions with subtle design that reinforces identity without hurting readability or accessibility. Track watch time and completion rates with and without captions to prove ROI and adjust style choices. For practical government guidance on captions and transcripts, see Section 508’s primer on creating captions and transcripts.

What Best Practices Improve the Captioning Experience

Great captions feel invisible because they match the video’s rhythm and never block the action. Start with clean audio because noise and echo hurt both human and AI captioning accuracy. Build style guides that define fonts, placement, maximum characters per line, and speaker identification rules. Train editors and producers to flag sequences that need special attention such as rapid-fire dialogue, foreign terms, or heavy sound effects. Review caption files on the same devices your audience uses, not just in the edit suite.

What to Consider for Font Style, Size, and Positioning

Pick a sans serif font with clear letterforms that hold up on phone screens and living room TVs. Set a size that reads at arm’s length on mobile while staying subtle on large displays. Use a drop shadow or semi-opaque box for contrast when background footage gets busy. Position captions inside safe title areas and adjust placement during lower thirds, credits, or on-screen text so nothing collides. Keep line breaks natural at phrase boundaries to support breath and rhythm while reading.
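Line breaking can be partly automated before an editor reviews it. The sketch below splits a caption into two lines at the space nearest the midpoint, which keeps the lines balanced; it is a simplification that looks only at length, so an editor would still move breaks to true phrase boundaries. The function name and the 32-character threshold are assumptions for illustration.

```python
def split_two_lines(text: str, max_chars: int = 32) -> str:
    """Break text into two balanced lines if it exceeds max_chars."""
    # Collapse runs of whitespace so positions reflect the displayed text.
    text = " ".join(text.split())
    if len(text) <= max_chars:
        return text
    spaces = [i for i, ch in enumerate(text) if ch == " "]
    if not spaces:
        return text  # A single long word cannot be split at a space.
    middle = len(text) / 2
    # Pick the space closest to the midpoint to balance the two lines.
    best = min(spaces, key=lambda i: abs(i - middle))
    return text[:best] + "\n" + text[best + 1:]


print(split_two_lines("I never said we should leave before the storm"))
```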

What Makes Captions Readable Without Distraction

Readers process text faster when you avoid clutter, so skip extra symbols or decorative flourishes. Use consistent speaker identification and keep sound effect descriptions short and purposeful. Limit each caption to two lines when possible and keep characters per line within a comfortable range. Time captions so each line appears long enough to read without strain yet moves with the scene’s pace. Run a readability pass during QC where an editor watches without audio to test the flow from start to finish.
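The line and length limits above lend themselves to an automated check that runs before the human readability pass. The sketch below is a minimal example; `lint_cue` is a hypothetical name, and the defaults of two lines and 32 characters per line are placeholders to replace with the values in your style guide.

```python
from typing import List


def lint_cue(text: str, max_lines: int = 2, max_chars: int = 32) -> List[str]:
    """Return a list of readability issues found in one caption's text."""
    issues = []
    lines = text.split("\n")
    if len(lines) > max_lines:
        issues.append(f"{len(lines)} lines (limit {max_lines})")
    for number, line in enumerate(lines, start=1):
        if len(line) > max_chars:
            issues.append(
                f"line {number} has {len(line)} characters (limit {max_chars})"
            )
    return issues


print(lint_cue("Short line\nAnother short line"))
print(lint_cue("One\nTwo\nThree"))
```

A check like this catches mechanical problems only; the silent watch-through described above still tells you whether the captions flow.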

What Matters in Creating Captions for Live vs. Pre-Recorded Content

Live content needs trained captioners, clear audio feeds, and backup plans for power or network hiccups. Build a delay buffer that allows quick corrections during breaks or timeouts in sports and live events. Pre-recorded content allows fine tuning, so invest time in timing, punctuation, and placement during edit review. For both cases, align on service levels for accuracy, latency, and outage handling, then monitor results against those metrics. Document lessons after each live event so your next broadcast benefits from real data and team feedback.
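To monitor an accuracy service level you need a number to track. One widely used proxy is word error rate (WER): the word-level edit distance between a verified reference transcript and the delivered captions, divided by the reference length. The sketch below is a plain implementation of that metric, swapped in here for illustration; it ignores punctuation, timing, and placement, so it is not a measure of regulatory compliance.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # previous[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


# One substituted word out of six reference words.
print(word_error_rate("the cat sat on the mat", "the cat sat on a mat"))
```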

What Role AI Plays in Modern Captioning Services

AI in captioning speeds up delivery, cuts repetitive work, and helps teams scale catalogs without sacrificing quality. Automatic speech recognition can draft captions in minutes, and newer models handle multiple speakers, accents, and domain terms better than before. Voice activity detection, speaker diarization, and model prompts help your tools track who speaks and when. AI translation expands reach by creating subtitle files for multilingual distribution across streaming apps and social media. You still control outcomes by training models with your style, glossary, and approved file formats. For a practical walkthrough of AI-assisted workflows, read our blog on the AI advantage in transcription, captioning, and translation.

What AI Tools Can Do in Auto-Generating Captions

AI tools convert speech to text, split text into caption blocks, and align blocks to timecodes. Many systems detect multiple speakers and add labels that editors can confirm during review. Domain adaptation lets the engine learn show names, character names, and product terms so accuracy rises. Integration with NLEs and MAMs allows editors to add captions as part of normal timelines rather than as a last step. With AI video dubbing and AI voice models on the rise, the same pipeline can also support voice cloning and translated audio tracks.
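The step from recognized words to caption blocks can be sketched as a simple grouping pass. The example below assumes the speech recognizer returns word-level timestamps as `(word, start, end)` tuples, and it starts a new caption when the text would exceed a character budget or when the speaker pauses. The function name, the 42-character budget, and the 0.8-second pause threshold are all illustrative assumptions, not how any particular product works.

```python
from typing import List, Tuple

Word = Tuple[str, float, float]  # (text, start seconds, end seconds)
Cue = Tuple[float, float, str]   # (start seconds, end seconds, text)


def _close(group: List[Word]) -> Cue:
    """Turn a run of words into one cue spanning first start to last end."""
    return (group[0][1], group[-1][2], " ".join(w[0] for w in group))


def words_to_cues(
    words: List[Word], max_chars: int = 42, max_gap_s: float = 0.8
) -> List[Cue]:
    """Group timestamped words into caption blocks."""
    cues: List[Cue] = []
    current: List[Word] = []
    for word in words:
        if current:
            candidate = " ".join(w[0] for w in current) + " " + word[0]
            paused = word[1] - current[-1][2] > max_gap_s
            # Start a new block when the text gets too long or speech pauses.
            if len(candidate) > max_chars or paused:
                cues.append(_close(current))
                current = []
        current.append(word)
    if current:
        cues.append(_close(current))
    return cues


words = [("Hello", 0.0, 0.4), ("there", 0.5, 0.9), ("friend", 2.5, 3.0)]
print(words_to_cues(words))
```

Output from a pass like this is a first draft; editors still confirm speaker labels, line breaks, and timing at scene changes.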

What Benefits AI Brings in Speed, Cost, and Scalability

AI reduces turnaround times from days to hours, which keeps publishing schedules tight for marketing videos and episodic drops. Lower per-minute costs allow teams to caption long back catalogs that would sit idle without automation. At scale, AI-driven captioning services handle peaks after a season launch, a live event, or a campaign blast. Operations leaders like predictable costs, and editors like spending time on nuance rather than on repetitive typing. Viewers benefit because more videos ship with closed captions and subtitles right when they want to watch.

What Human Oversight Still Adds to AI-Driven Captioning

Human editors add judgment that machines still miss in tone, sarcasm, and cultural references. They correct homophones, localize idioms, and ensure speaker identification stays coherent from scene to scene. Editors also polish punctuation and line breaks so reading feels natural, not mechanical. Compliance specialists confirm that files meet captioning laws, house style, and platform specs such as safe regions and filename rules. The best results come from hybrid workflows where AI handles first pass creation and humans handle final review.

Why Teams Choose Digital Nirvana for Captioning Compliance

Digital Nirvana combines software and services so your team meets caption rules without extra headcount. Editors work inside TranceIQ to create, edit, and export WebVTT, SRT, SCC, TTML, and more with broadcast timing. Compliance teams verify playout with MonitorIQ while operations route caption files through your MAM or PAM. For turnkey work, our closed captioning services deliver human-reviewed files that align with FCC, DCMP, and WCAG guidance. When you need translation or SDH variants, our media enrichment solutions cover subtitles, transcripts, and multilingual deliverables.

In summary…

A short recap helps teams turn policy into daily habits that improve the viewing experience and keep content compliant. Use these bullets to guide planning, budgeting, and quality control across TV, streaming, and social media.

- Quality standards to hit: Ensure accuracy, synchronicity, completeness, and placement on every program.

- Speaker and effects clarity: Label multiple speakers when needed and describe sound effects that matter to meaning.

- Formats to trust: Keep clean masters in SRT, WebVTT, SCC, or TTML so you can deliver to any platform.

- Platform fit: Use closed captions for control, open captions for muted autoplay feeds, and subtitles for multilingual reach.

- Regulatory guardrails: Follow FCC rules for TV, DCMP practices for education, and WCAG AA for web and apps.

- Design choices: Set readable fonts, strong contrast, and safe positioning that adapts to on-screen elements.

- Workflow reality: Plan different approaches for live versus pre-recorded content and record what works.

- AI with oversight: Let AI tools draft, then let humans refine for tone, accuracy, and legal compliance.

Wrap up the plan by assigning owners for ingest, creating captions, QC, and delivery so accountability stays clear. Set success metrics such as caption error rates, on-time delivery, and viewer satisfaction. Review monthly and adjust styles or vendors when data shows friction. When you treat captions like core editorial, your programs reach a wider audience and meet caption rules without drama. For teams that want a partner, Digital Nirvana supports closed captioning, metadata, and compliance services so your staff can focus on storytelling.

FAQs

What is the difference between closed captions and subtitles?

Closed captions serve viewers who need both dialogue and sound effects described for full comprehension. Subtitles present translated dialogue for viewers who can hear the audio but do not understand the spoken language, and they usually skip non-speech audio. Many platforms also offer SDH subtitles that add speaker identification and key sound cues for accessibility. Use closed captions on TV and streaming when you must meet captioning laws and provide a toggle in the player. Use subtitles to localize content for global audiences while keeping separate files per language for easy updates.

What captioning rules apply to YouTube and social media videos?

YouTube supports closed captions through subtitle file uploads such as SRT and WebVTT, and creators can edit timing in Studio. The platform also allows auto captions, but you improve accuracy by reviewing and correcting them before publishing. Other social media apps vary, so creators often burn in open captions to guarantee readability on muted autoplay feeds. Keep brand style simple to maintain readability and keep safe margins for vertical formats such as 9:16. Track performance with and without captions to show how captions lift completion rates and watch time.

What are the captioning requirements for live broadcasts?

Live TV and live streams need captions that appear with minimal delay so viewers can follow in real time. Broadcasters hire trained stenographers, re-speakers, or qualified vendors and set service levels for latency and error rate. Engineering teams route clean audio and provide glossaries so captioners keep names, places, and technical terms consistent. Producers plan correction windows during breaks and issue post-event fixes for VOD versions that will live online. Treat live captioning as a specialized skill that requires backups, testing, and clear roles before each show.

What makes captions compliant under FCC guidelines?

Compliant captions hit four goals at once: accuracy, synchronicity, completeness, and proper placement that avoids covering important visuals. Stations document their process, answer complaints on time, and audit vendor performance. Editors time captions to match fast dialogue, check spelling of names, and fix punctuation that affects meaning. Engineers confirm files travel cleanly through transcode and playout so frame timing stays intact. When every team member owns a slice of these steps, the whole operation meets captioning laws and keeps viewers satisfied.

What AI tools are used in captioning services today?

Teams use automatic speech recognition for first drafts, diarization for speaker identification, and translation engines for multilingual subtitle files. Quality rises when you train models with show terms, product names, and character lists from your scripts. Editors review output in NLEs, correct misheard words, and refine punctuation and line breaks. Delivery systems export WebVTT, SRT, or TTML so platforms can add captions or burn in open captions for social media. Hybrid AI plus human review keeps speed and quality in balance across broadcast, streaming, and AI video workflows.