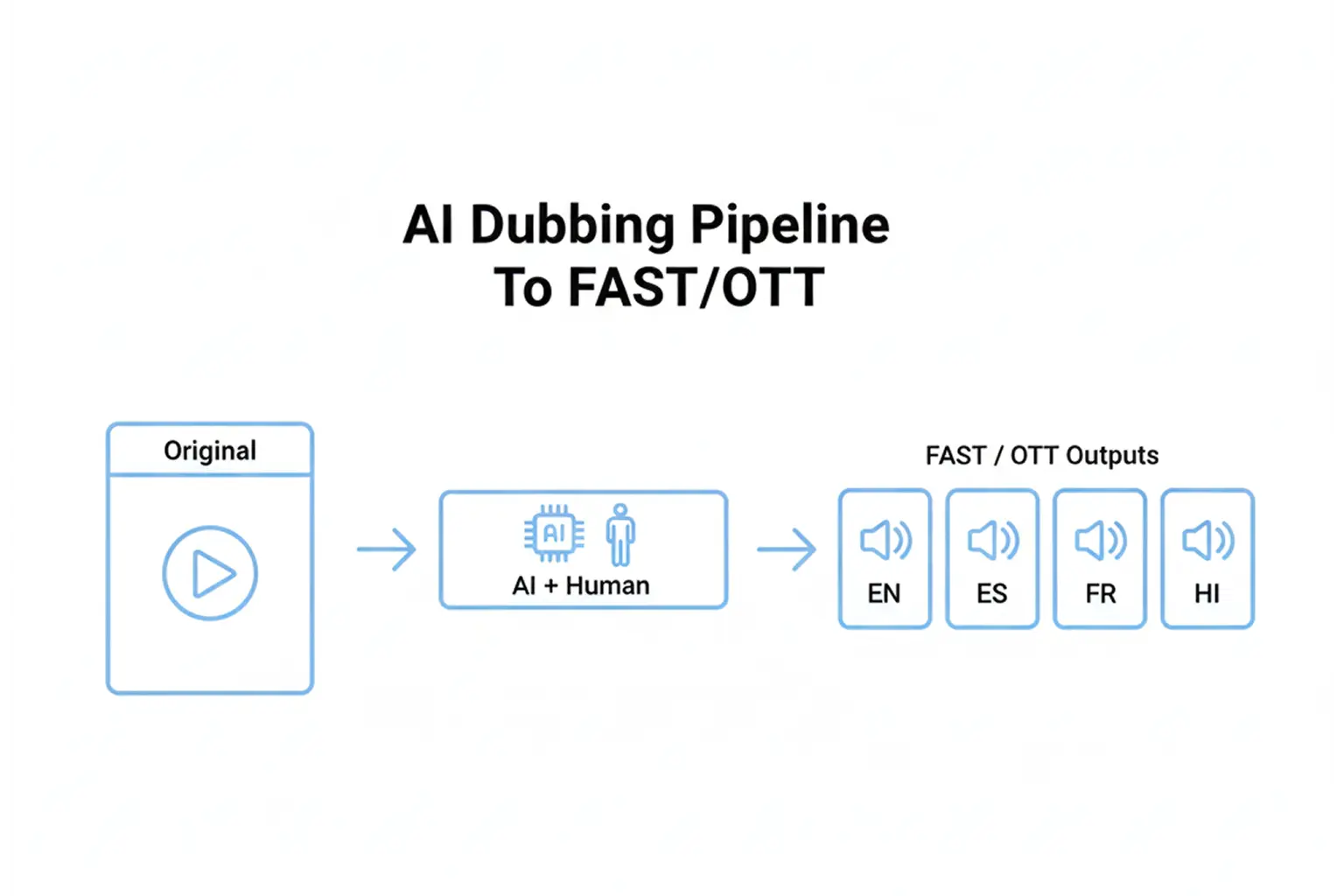

You can localize a show into five languages in days or in weeks. The difference is not just AI; it is the workflow around it.

Broadcasters moving into FAST and OTT have a clear mandate: ship more localized content, faster, without lowering quality or creating delivery rejections. AI dubbing helps, but only when it is paired with the right script process, translation controls, voice strategy, and QC gates. Hybrid approaches that combine automation with human review are widely used because they balance speed with accuracy and cultural nuance.

This guide walks through an end-to-end AI dubbing workflow designed for broadcast operations, with deliverables that are FAST and OTT-ready.

What Makes AI Dubbing Broadcast-Ready

Broadcast-ready dubbing is not just understandable speech in another language. It is a deliverable that meets three needs at the same time:

- Editorial quality: translation fits the scene, sounds natural, and respects tone and intent.

- Technical stability: audio is clean, mixed correctly, loudness-compliant, and stays in sync during long-form playback.

- Distribution readiness: packaging supports multi-audio and subtitles across FAST and OTT endpoints without surprises.

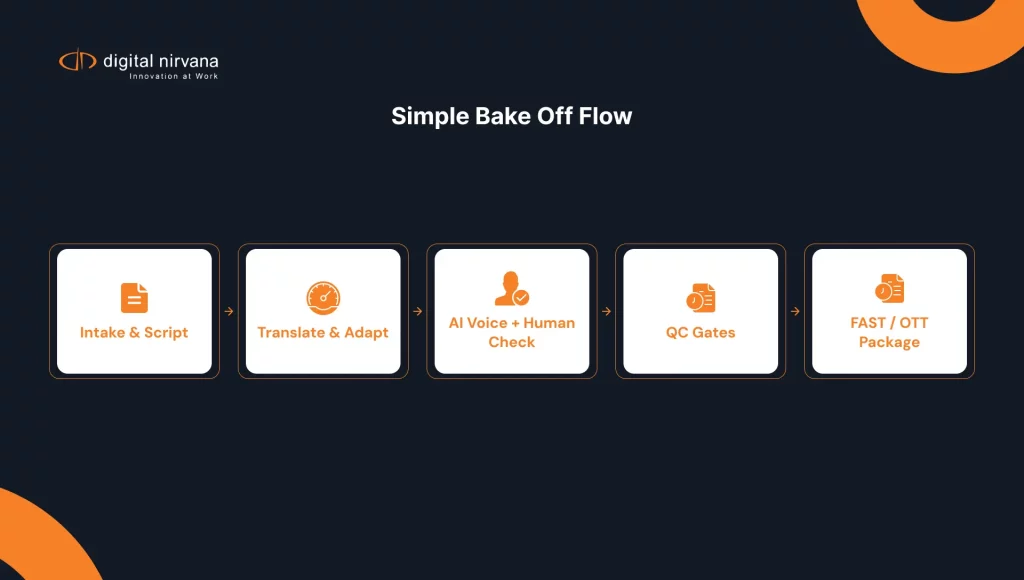

Digital Nirvana positions Subs and Dubs as a hybrid approach that combines AI with human-in-the-loop review to produce natural-sounding results at scale.

Workflow Stage 1: Intake And Script Prep

Define The Target Deliverable Before You Start

Before translation begins, lock these decisions:

- Target languages and rollout order

- Dubbing style: full dub versus voiceover style, and whether original ambience remains audible

- Required deliverables: audio-only tracks, subtitles, captions, transcripts, and metadata handoff

- Target platforms: FAST partner specs and OTT player requirements

This prevents the most common rework loop: finishing a dub, then discovering you needed different timing, different deliverable formats, or different mixing rules.

Create A Script Package That AI And Humans Can Both Use

A broadcast-friendly script package typically includes:

- Dialogue segmentation by speaker and scene

- Reference time ranges per line for timing and QC

- Pronunciation notes for names, locations, and branded terms

- A terminology list that must stay consistent across episodes and seasons

For news and sports libraries, this terminology list matters more than most teams expect. Names, teams, venues, and sponsor phrases have to be consistent across packages, clips, and highlight cuts.
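As a minimal sketch of what such a script package could look like in code, the structure below keeps lines, pronunciation notes, and terminology together. The class names, field names, and validation rules are hypothetical and chosen only for illustration; they are not a Digital Nirvana schema.

```python
from dataclasses import dataclass, field


@dataclass
class ScriptLine:
    """One dialogue line in the script package (hypothetical schema)."""
    speaker: str
    scene: str
    start_s: float  # reference start time, in seconds
    end_s: float    # reference end time, in seconds
    text: str

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


@dataclass
class ScriptPackage:
    lines: list[ScriptLine] = field(default_factory=list)
    # Spoken form for names, locations, and branded terms.
    pronunciations: dict[str, str] = field(default_factory=dict)
    # Language code -> {source term: approved target term}.
    terminology: dict[str, dict[str, str]] = field(default_factory=dict)

    def validate(self) -> list[str]:
        """Return readable problems; an empty list means the package is usable."""
        problems = []
        for i, line in enumerate(self.lines):
            if line.end_s <= line.start_s:
                problems.append(f"line {i}: end time is not after start time")
            if not line.speaker:
                problems.append(f"line {i}: missing speaker")
        return problems
```

Keeping the terminology map inside the package, rather than in a separate spreadsheet, makes it easier to reuse the same approved terms across episodes, clips, and highlight cuts.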

Workflow Stage 2: Translation And Adaptation

Translate For Meaning, Then Adapt For Timing

Dubbing translation is not just “correct words.” It must fit timing and pauses so the resulting track feels aligned to the visuals. Research on dubbing translation highlights timing structure as part of what makes a dub feel coherent.

A practical approach is two-pass:

- Pass one: faithful translation for meaning and terminology

- Pass two: adaptation for isochrony (matching the duration of the original line), pacing, and natural phrasing
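One way to decide which lines need the second pass is a rough speaking-rate check against each line's reference time range. The sketch below is a heuristic, not a standard: the function names are hypothetical, and the default of 17 characters per second is a placeholder assumption that would need tuning per language and content type.

```python
def fits_time_slot(translated: str, slot_s: float, max_chars_per_s: float = 17.0) -> bool:
    """Rough isochrony check: could the line plausibly be spoken within the slot?"""
    if slot_s <= 0:
        raise ValueError("slot_s must be positive")
    chars = len("".join(translated.split()))  # count characters, ignoring whitespace
    return chars / slot_s <= max_chars_per_s


def flag_for_adaptation(lines, max_chars_per_s: float = 17.0):
    """lines: iterable of (line_id, translated_text, start_s, end_s).

    Returns the ids of lines that look too long for their time range.
    """
    return [
        line_id
        for line_id, text, start_s, end_s in lines
        if not fits_time_slot(text, end_s - start_s, max_chars_per_s)
    ]
```

Lines flagged this way are candidates for shortening or rephrasing by an adapter; the check says nothing about whether the phrasing sounds natural, which still needs a human ear.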

Use Human Review Where It Protects Brand And Context

Hybrid dubbing guidance consistently emphasizes that human review helps ensure cultural fit, accurate intent, and fewer embarrassing errors, especially when content includes idioms, humor, or sensitive topics.

In broadcast reality, human review is also where you catch:

- Misread proper nouns, especially in sports and politics

- Incorrect honorifics or formal registers

- Region-specific phrasing that affects audience trust

Workflow Stage 3: Voice And Performance Strategy

Choose The Right Voice Model Strategy For Your Content

Broadcasters usually land in one of these models:

- Series voice continuity: consistent voice for recurring characters or hosts

- Program-based voicing: one voice set per show brand

- Segment-based voicing: lighter approach for clips, promos, or short-form

The voice decision should align with the promise you make to your audience. If you are launching a FAST channel, consistency across episodes can matter as much as speed.

Preserve What Viewers Feel, Not Just What They Hear

A strong dub carries emphasis, emotion, and intent, not just literal meaning. Large-scale studies of professional dubbing suggest that vocal naturalness and translation quality can matter more than strict lip-sync perfection in many cases.

For broadcasters, this is a useful rule of thumb:

- Prioritize naturalness and clarity for documentaries, reality, news explainers, and sports analysis

- Tighten sync and performance direction for drama, comedy, and character-driven shows

Workflow Stage 4: QC That Catches Real Failures

If you want FAST and OTT-ready dubs, QC needs both editorial checks and technical checks. There are emerging rubrics specifically aimed at assessing dubbed and voice-over quality across script adaptation and output quality, including AI-generated dubs.

QC Gate 1: Language And Meaning

Check:

- Terminology accuracy and consistency

- Names and pronunciation guidance followed

- Tone matches the scene: serious, playful, urgent, formal

- No invented facts, especially in news content
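The terminology check in this gate can be partly automated before a human reviewer looks at tone and meaning. The sketch below assumes a simple glossary of source terms mapped to approved target terms; the function name and data shapes are hypothetical, and plain substring matching will miss inflected or declined forms, so treat its output as a review queue rather than a verdict.

```python
def terminology_violations(pairs, glossary):
    """Find lines where a glossary term appears in the source but the
    approved translation is missing from the target.

    pairs: iterable of (line_id, source_text, translated_text)
    glossary: dict mapping source term -> approved target term
    """
    violations = []
    for line_id, source, translated in pairs:
        src, tgt = source.casefold(), translated.casefold()
        for term, approved in glossary.items():
            if term.casefold() in src and approved.casefold() not in tgt:
                violations.append((line_id, term, approved))
    return violations
```

For example, with a glossary entry mapping "World Cup" to "Copa del Mundo", a line translated with "Mundial" instead would be returned for review.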

QC Gate 2: Timing And Sync

Check:

- Line timing feels natural relative to scene changes

- No consistent early or late drift across long segments

- Pauses feel intentional, not like a machine reading text
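Consistent drift is easier to catch with a number than by ear. As a sketch, assume you already have sync offsets measured at several points in the program (how they are measured is outside this example). A least-squares slope over those samples then estimates how fast the dub is sliding early or late. The function name and sample format are hypothetical.

```python
def drift_ms_per_hour(samples):
    """Estimate sync drift as the least-squares slope of offset over time.

    samples: list of (position_s, offset_ms), where offset_ms is the measured
    difference between the dubbed line and its reference time at that position.
    Returns the slope scaled to milliseconds of drift per hour of playback.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_o = sum(o for _, o in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        raise ValueError("samples must cover more than one position")
    cov = sum((t - mean_t) * (o - mean_o) for t, o in samples)
    return cov / var_t * 3600.0
```

A slope near zero with a constant non-zero offset points to a fixed alignment error, while a steadily growing offset suggests a frame-rate or sample-rate mismatch somewhere in the chain. The acceptable threshold is a house or partner decision, not something this sketch defines.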

QC Gate 3: Audio Quality And Mix

Check:

- No clipping, pops, or artifacts

- Music and effects are not unintentionally masked

- Dialogue intelligibility is stable across devices

For broadcast loudness targets, many operations reference standards like EBU R128 (including the -23 LUFS target) and ATSC A/85 guidance, depending on region and distribution.
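A loudness gate can be expressed as a simple tolerance check once the integrated loudness of the mixed track has been measured with an ITU-R BS.1770 meter. The sketch below does not measure audio; it only compares a measured value to a target. The tolerances shown (±0.5 LU around -23 LUFS for EBU R128, ±2 dB around -24 LKFS for ATSC A/85) are commonly cited figures and are assumptions here; confirm them against the current documents and each partner's delivery spec.

```python
# (target loudness, tolerance). Values are assumptions to verify per delivery spec.
LOUDNESS_TARGETS = {
    "ebu_r128": (-23.0, 0.5),  # LUFS target, tolerance in LU
    "atsc_a85": (-24.0, 2.0),  # LKFS target, tolerance in dB
}


def loudness_ok(measured_lufs: float, spec: str) -> bool:
    """Return True if the measured integrated loudness is within tolerance."""
    target, tolerance = LOUDNESS_TARGETS[spec]
    return abs(measured_lufs - target) <= tolerance


def loudness_report(tracks: dict, spec: str) -> dict:
    """tracks: {language_code: measured integrated loudness}. Returns pass/fail per track."""
    return {lang: loudness_ok(value, spec) for lang, value in tracks.items()}
```

Running the check per language track, rather than once per program, helps catch the case where one dubbed mix is noticeably louder or quieter than the original and the other languages.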

QC Gate 4: Compliance And Accessibility Readiness

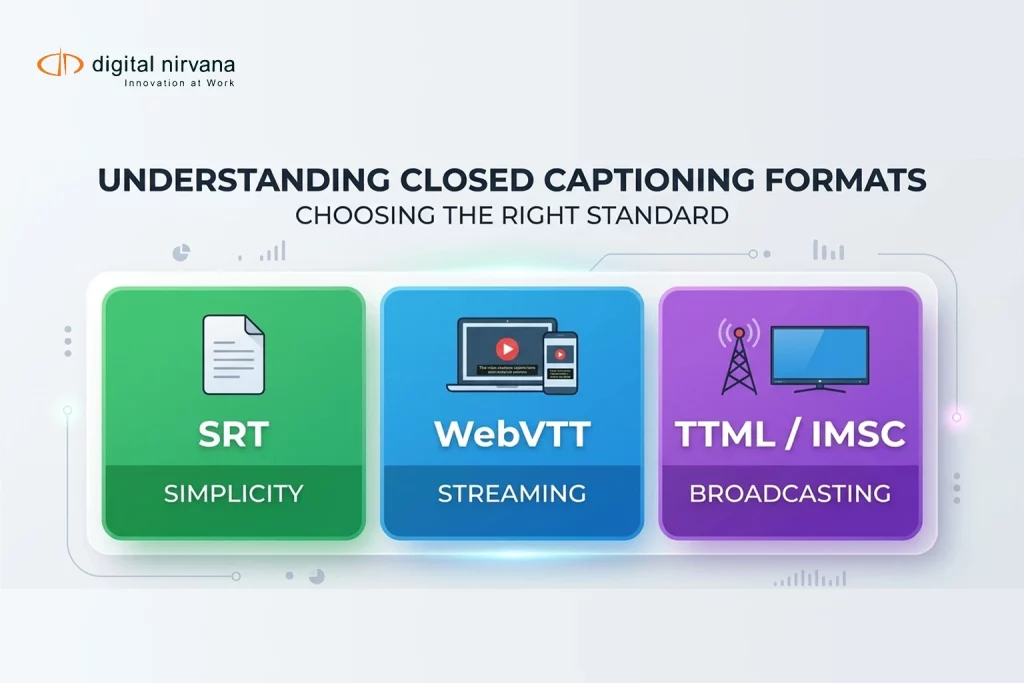

Even when dubbing is the focus, subtitles and captions often ship alongside it for accessibility and platform support. IMSC profiles are designed specifically for subtitle and caption delivery worldwide.

Workflow Stage 5: Distribution Packaging For FAST And OTT

This is where “good dubbing” becomes “shippable dubbing.”

Multi-Audio Tracks Need Clean Rendition Metadata

FAST and OTT platforms commonly expect multi-audio support with correctly declared language renditions. In HLS ecosystems, alternate audio renditions are represented via EXT-X-MEDIA tags and must be defined consistently for selection and playback.

Practical packaging checks:

- Language codes are correct and consistent

- Track names are user-friendly, not internal codes

- Default and fallback behavior are defined per market
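The checks above can be sketched against HLS packaging. The helper below builds an EXT-X-MEDIA line for an alternate audio rendition using attributes defined in the HLS specification (TYPE, GROUP-ID, NAME, LANGUAGE, DEFAULT, AUTOSELECT, URI), and a second function flags common labeling problems. The function names, dictionary keys, and the language-tag pattern are illustrative assumptions; the pattern only checks the rough shape of a tag and is not full language-tag validation.

```python
import re

# Rough shape check only, e.g. "en", "es-419", "pt-BR".
LANG_SHAPE = re.compile(r"^[a-zA-Z]{2,3}(-[a-zA-Z0-9]{2,8})*$")


def ext_x_media_audio(group_id, name, language, uri, default=False):
    """Build one EXT-X-MEDIA line for an alternate audio rendition.

    Values are inserted as-is; they must not contain double quotes or line breaks.
    """
    attrs = [
        "TYPE=AUDIO",
        f'GROUP-ID="{group_id}"',
        f'NAME="{name}"',
        f'LANGUAGE="{language}"',
        f"DEFAULT={'YES' if default else 'NO'}",
        "AUTOSELECT=YES",
        f'URI="{uri}"',
    ]
    return "#EXT-X-MEDIA:" + ",".join(attrs)


def check_renditions(renditions):
    """renditions: list of dicts with 'name', 'language', and 'default' keys,
    all belonging to the same rendition group. Returns a list of problems."""
    problems = []
    if sum(1 for r in renditions if r.get("default")) > 1:
        problems.append("more than one rendition is marked DEFAULT=YES")
    names = [r["name"] for r in renditions]
    if len(set(names)) != len(names):
        problems.append("NAME values are not unique within the group")
    for r in renditions:
        if not LANG_SHAPE.match(r["language"]):
            problems.append(f"suspicious language tag: {r['language']!r}")
    return problems
```

Which rendition is the default in each market is an editorial decision; the code only checks that the declaration is unambiguous. Playback selection should still be validated on real devices and partner players.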

Subtitle And Caption Formats Must Match The Platform Reality

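Many streaming workflows rely on TTML-based formats for broad compatibility, and in particular on IMSC, the W3C profile of TTML for subtitles and captions. Standardizing on IMSC is generally recommended to improve future compatibility in streaming contexts, but always confirm which format and profile each platform actually accepts.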

FAST-Specific Watchouts

FAST can feel linear, but it behaves like OTT. That means you should validate:

- Long-play stability, no gradual sync drift over time

- Ad-break transitions and return-to-content behavior

- Consistency across partner ingest and re-encode points

Implementation Checklist

- Define target languages, rollout order, and platform deliverables up front

- Build a script package with segmentation, time ranges, and terminology controls

- Use two-pass translation: meaning first, then timing adaptation

- Select a voice strategy that supports your channel brand and content type

- Run QC in gates: language, timing, audio, and compliance

- Package multi-audio correctly and validate playback selection

- Deliver subtitles and captions in platform-accepted formats like IMSC, where required

For teams looking to operationalize this with a hybrid, broadcaster-friendly service model, Digital Nirvana’s Subs and Dubs is built on AI acceleration and human review for quality and natural results.

FAQs

AI dubbing uses automation to accelerate translation and voice generation, enabling localized audio tracks to be produced faster than with traditional pipelines. Many broadcasters use hybrid approaches that combine human review with automation to maintain accuracy and cultural fit.

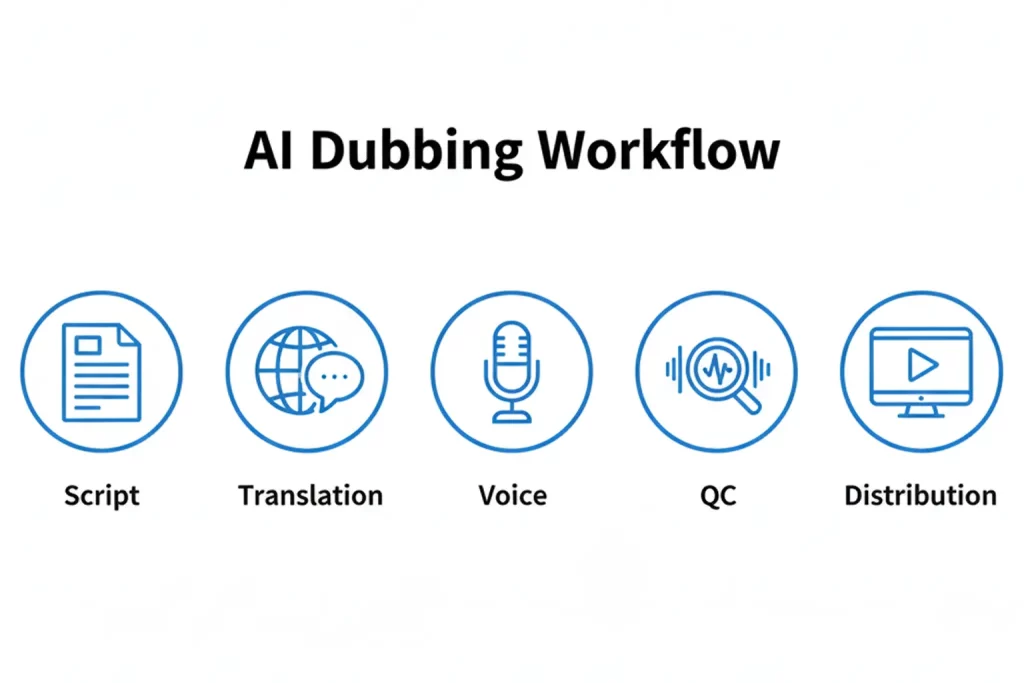

An AI dubbing workflow is the end-to-end process that turns a source program into a localized deliverable, including script prep, translation and adaptation, voice generation, QC, and packaging for distribution.

To QC AI dubs, use a structured rubric across language accuracy, timing and sync, audio quality, and platform compliance. Quality assessment models for dubs and voice-overs emphasize evaluating both adaptation and output quality, which aligns well with gated QC.

FAST and OTT platforms often require properly labeled multi-audio renditions and compatible subtitle formats. HLS workflows rely on declared alternate audio renditions, and IMSC profiles are intended for subtitle and caption delivery worldwide.

AI dubbing often still needs human review, especially for names, context, tone, and culture. Human-in-the-loop approaches are commonly recommended to reduce quality degradation and ensure the dub lands correctly for the audience.

Conclusion

AI dubbing becomes a competitive advantage when it is run as a repeatable broadcast workflow rather than a one-off experiment. If you lock the deliverable early, control terminology, adapt translations for timing, and use gated QC before packaging, you can scale languages without sacrificing viewer trust or platform acceptance.

Key Takeaways:

- Treat AI dubbing as an operations pipeline: script package, translation and adaptation, voice, QC, then distribution.

- Use hybrid review to protect meaning, tone, and cultural fit, especially when dealing with news and sports terminology.

- QC must include timing, audio quality, and loudness targets, not only translation correctness.

- FAST and OTT readiness depend on proper multi-audio packaging and subtitle standards, such as IMSC, where required.

- If you want a broadcaster-focused hybrid delivery model, consider a service built for AI speed with human-in-the-loop quality, such as Digital Nirvana’s Subs and Dubs.